And we’re off: the USA leg of the Integrate conference started today in Building 92 on the Microsoft campus in Redmond.

Check out the recap of the events on Day 2 and Day 3 at Integrate 2017 USA.

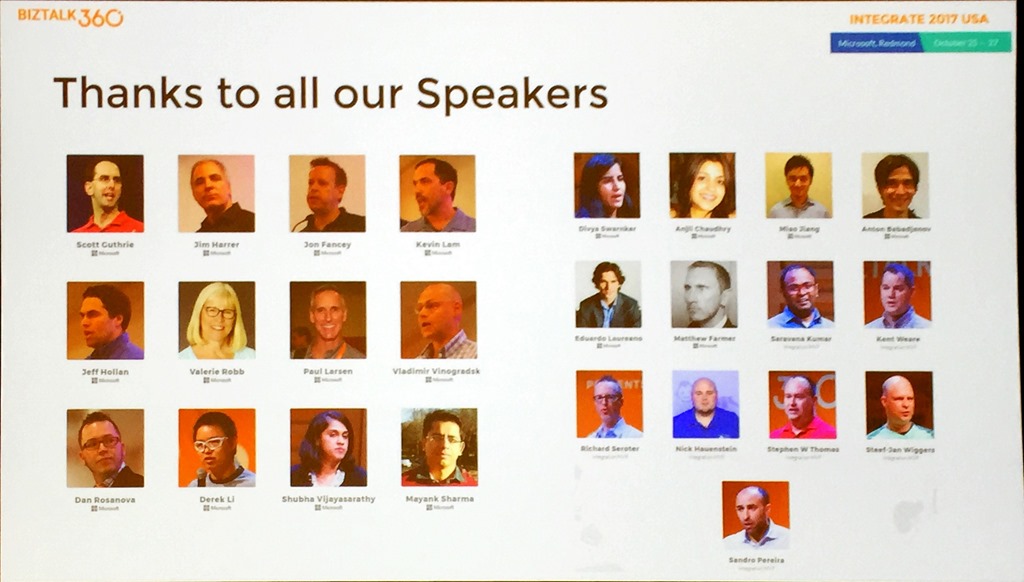

Saravana kicked off proceedings by setting the scene and giving us an indication of who we’re going to see over the next two and a half days.

It’s a good line up with speakers from the Microsoft integration teams and some great community speakers.

There was a shout out for Integration Monday and Middleware Friday, two awesome community efforts supported by Saravana and BizTalk360.

Saravana was followed by Duncan Barker from BizTalk360, who explained that BizTalk360 has now grown to 50 people and spoke about Turbo360 (formerly known as Serverless360) and how that has grown and continues to be developed.

Duncan also teased 2 new products that are coming in 2018, so that’s definitely something to look out for. He mentioned that work is already underway for Integrate 2018, so watch your mailboxes for more information as the plans begin to take shape.

With the introductions and scene setting done, it was time for the leader of the awesome integration team to take the stage.

Jim Harrer – Limitless Possibilities with Azure Integration Services

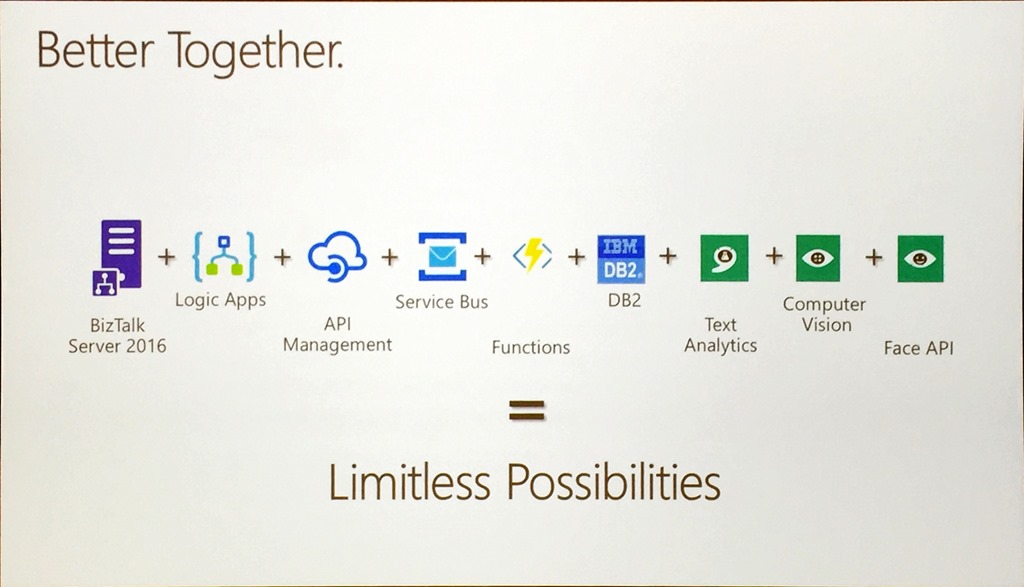

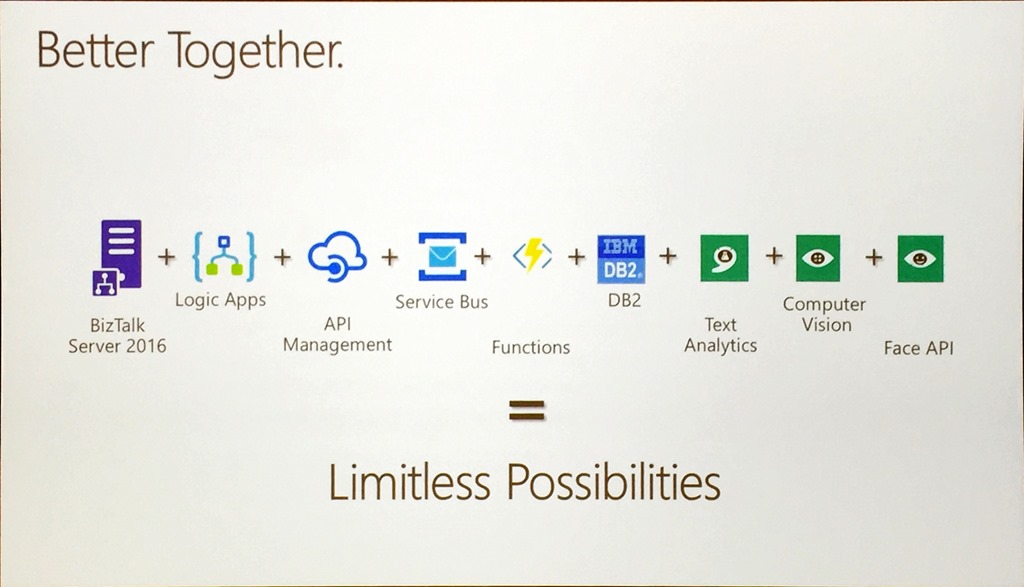

Jim’s message was very much one of integration being the connective tissue that all solutions need to tie things together, reinforcing that there is ongoing investment in BizTalk Server and the story that Logic Apps and BizTalk Server are Better Together.

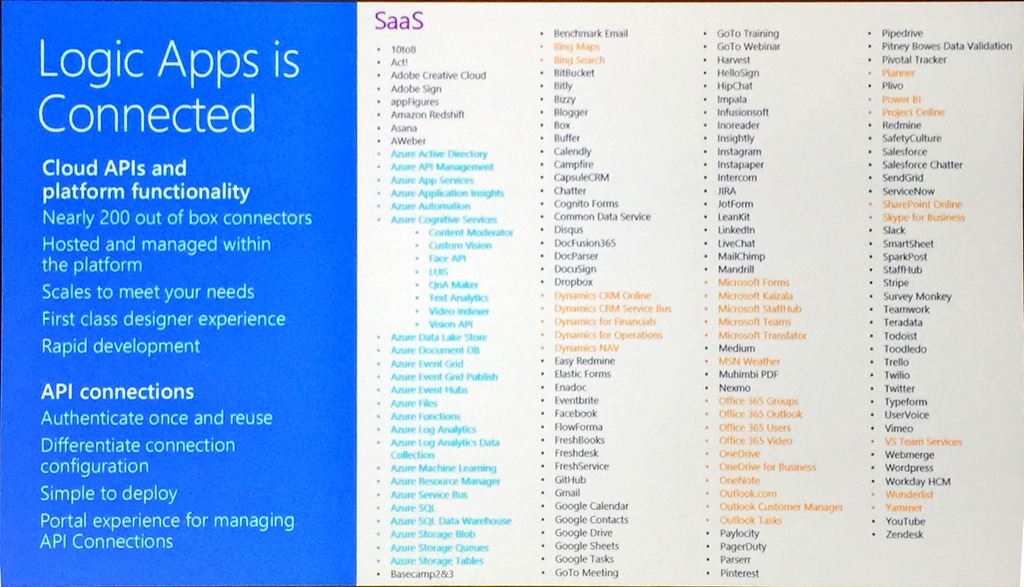

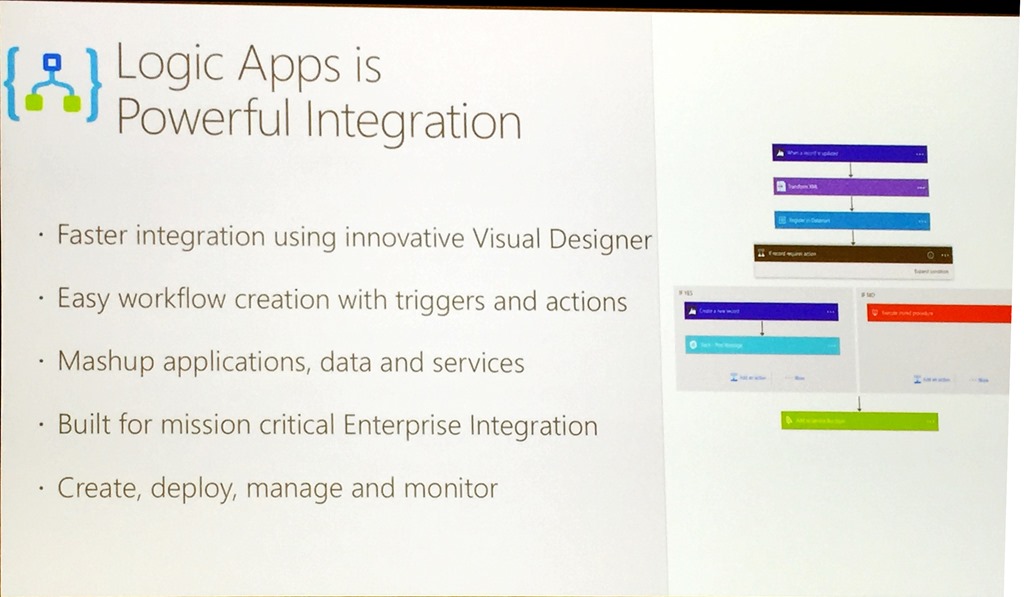

With over 180 connectors now in Logic Apps, including many that integrate directly with Azure services, it is possible to build integration solutions that span on-premises and cloud more effectively and to accelerate adoption through hybrid integration. Taking an API-first approach is a great way to unlock business value.

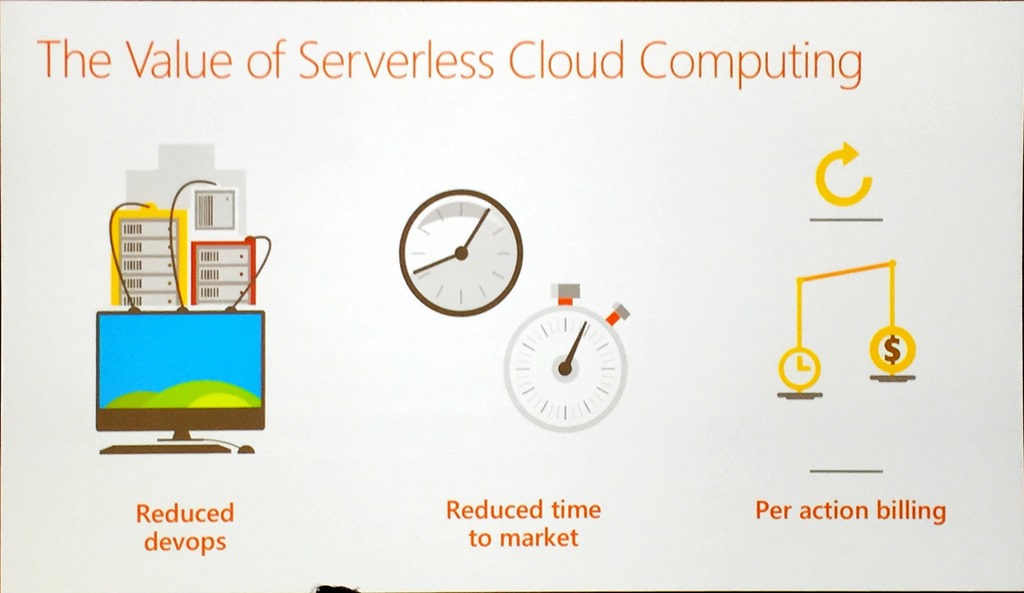

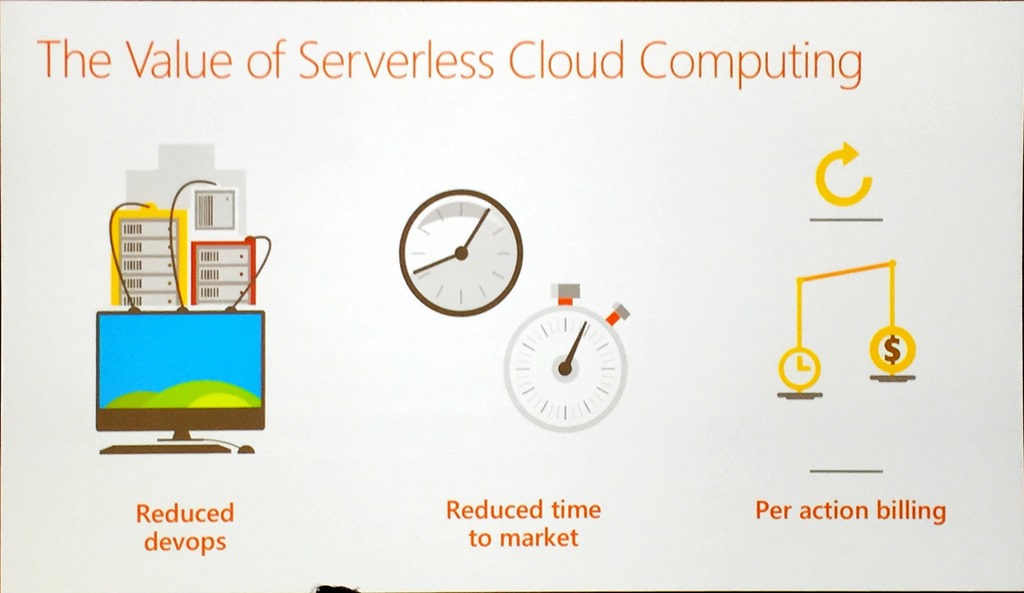

Jim then moved on to serverless, a platform that is just there ready for you to use when you need it.

With serverless, you get improved build and delivery, reduced time to market and per action billing and it really flips traditional development on its head.

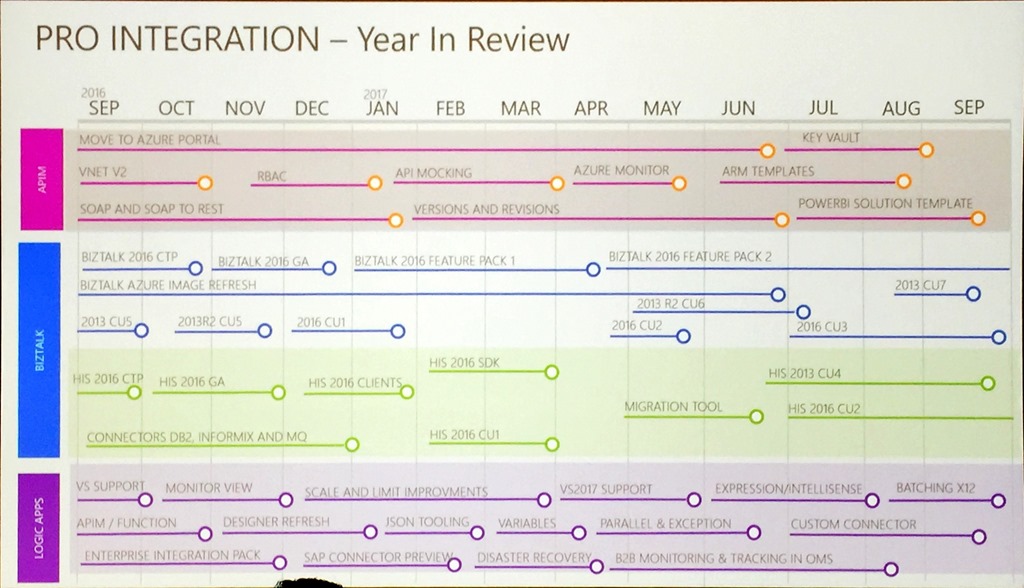

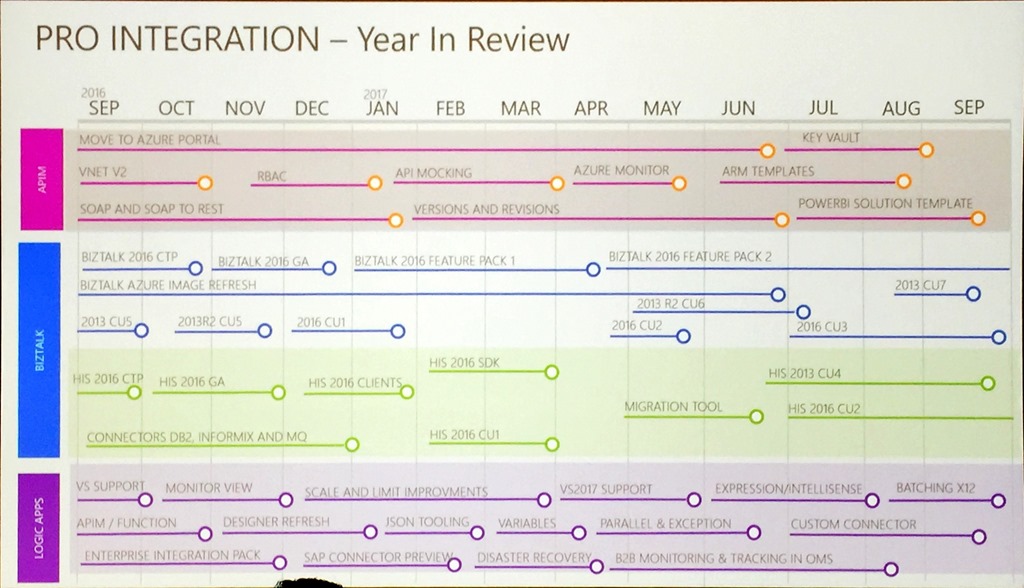

The Pro Integration team has had a busy year, and this was shown in a single slide.

This shows just how quickly things are changing and evolving and has included things like Logic Apps going GA, feature packs being introduced for BizTalk and API Mocking which has allowed teams to be more agile and progress at greater speed, making it possible to deliver integration solutions in weeks rather than months.

This agility has led to integration getting a seat at the table instead of being an afterthought.

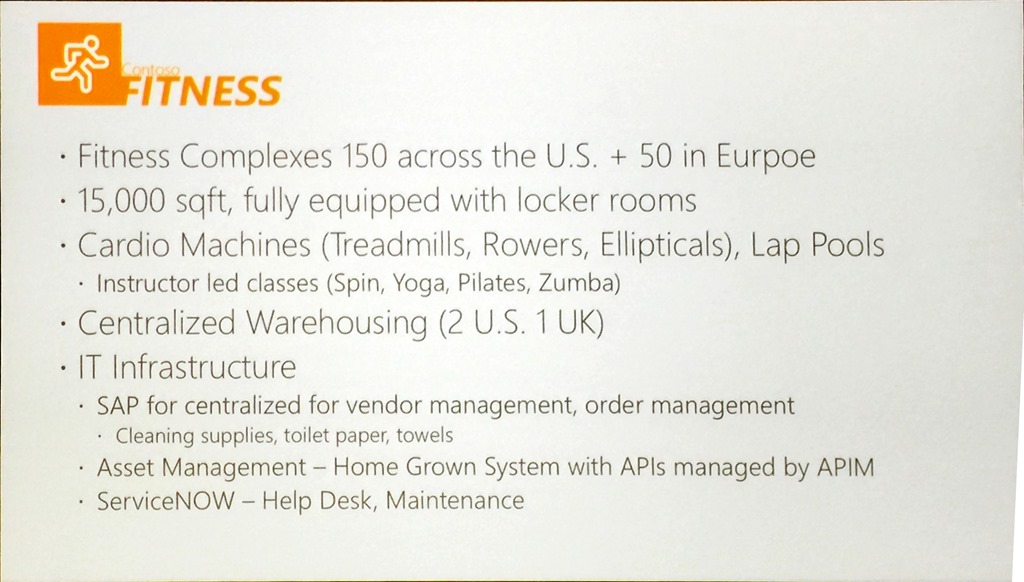

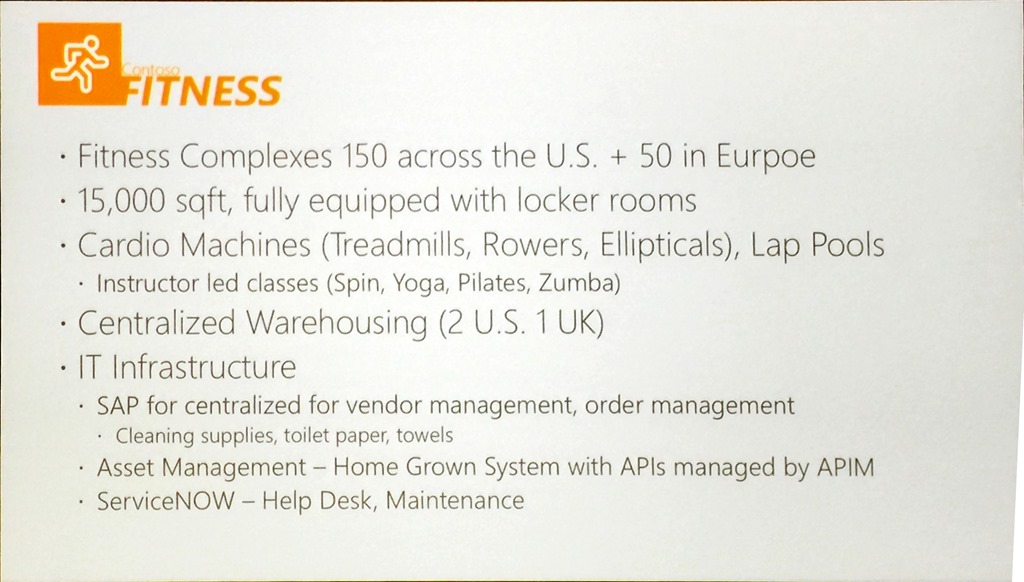

We then had some great demos from Jon Fancey, Kevin Lam and Jeff Hollan who introduced the demo scenario that would be used throughout the conference, Contoso Fitness.

Jon kicked off the demos with a Logic App calling Spotify. This allowed him to show the new Custom Connector and a great resource, https://apis.guru/browse-apis/.

Kevin followed up looking at Azure Security Center and showed the tooling that was introduced at Microsoft Ignite recently. This provides integration directly between Azure Security Center and Logic Apps, including playbooks, which are templates that integrate directly into typical service management tools such as ServiceNow.

Jeff did the last demo on Logic Apps and Cognitive Services. This showed the power of using the Video Indexer API and the ability to spin up a Docker container through a connector that will be released shortly. This container used FFmpeg, an open source tool, to take the transcript generated by the indexer and apply the information as subtitles in the video.

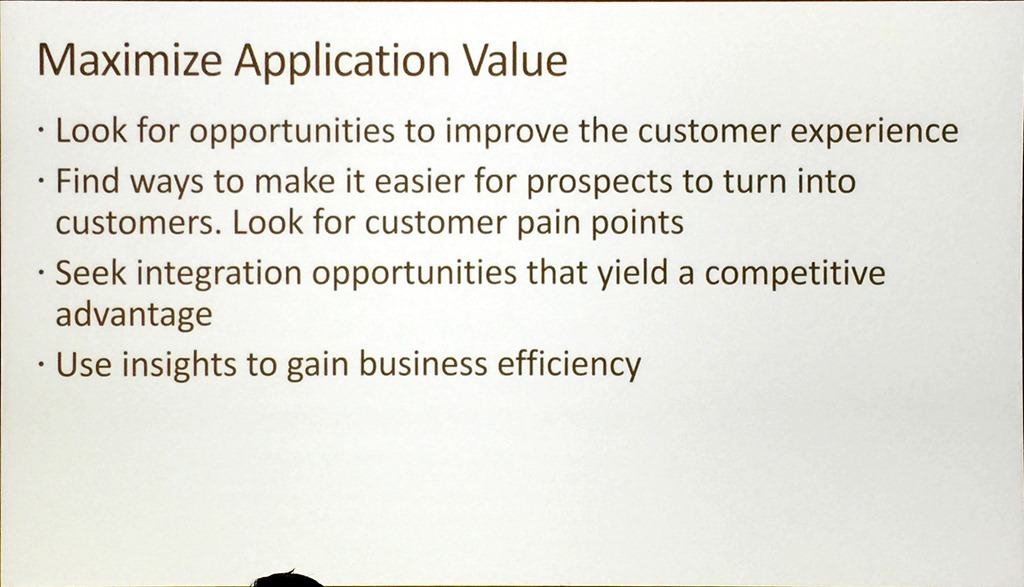

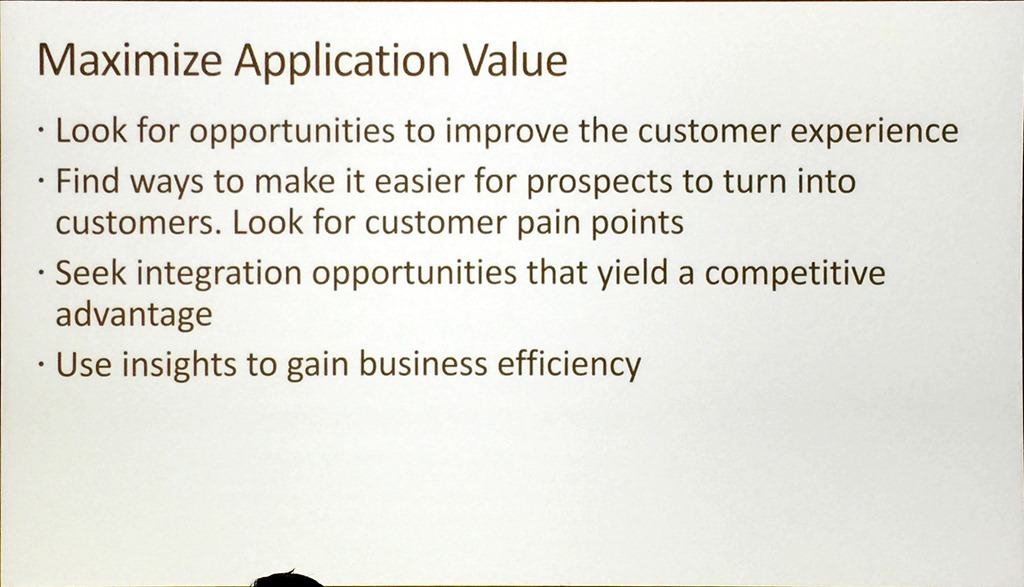

We finished with Jim urging everyone to maximise the value of their projects using integration.

Final message:

“Now is the time for integrators to unlock the impossible”

Paul Larsen – BizTalk – Connecting line-of-business applications across the Enterprise

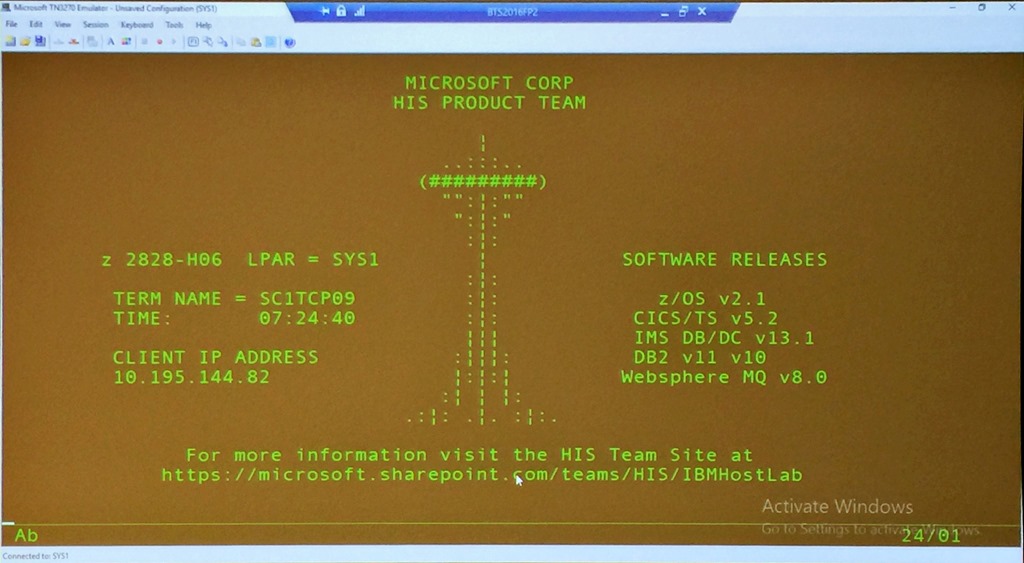

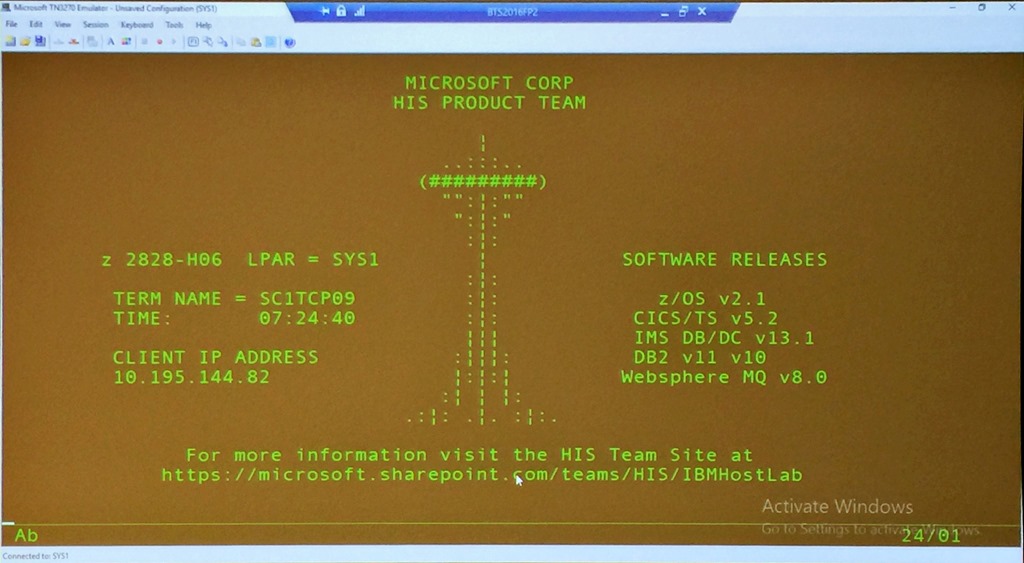

Paul opened his presentation with a great image of a green screen, a mainframe that is running on campus.

This set the scene for a great presentation and dive into BizTalk and heritage systems. Paul insisted on calling them heritage rather than legacy, as heritage is something you celebrate and love whilst legacy has a number of negative connotations!

Paul again emphasized the importance of hybrid integration between BizTalk and the cloud, and the message really started resonating. He spent some time positioning BizTalk and how it had changed along with Host Integration Server over the years he has been on the team.

For me, his demo involving Contoso Fitness showcasing mobile applications, Logic Apps, virtual machines, HL7 and a mainframe was one of the best of the day. It showcased hybrid integration with the Logic Apps adapter, and the real breadth and depth of the Microsoft integration story.

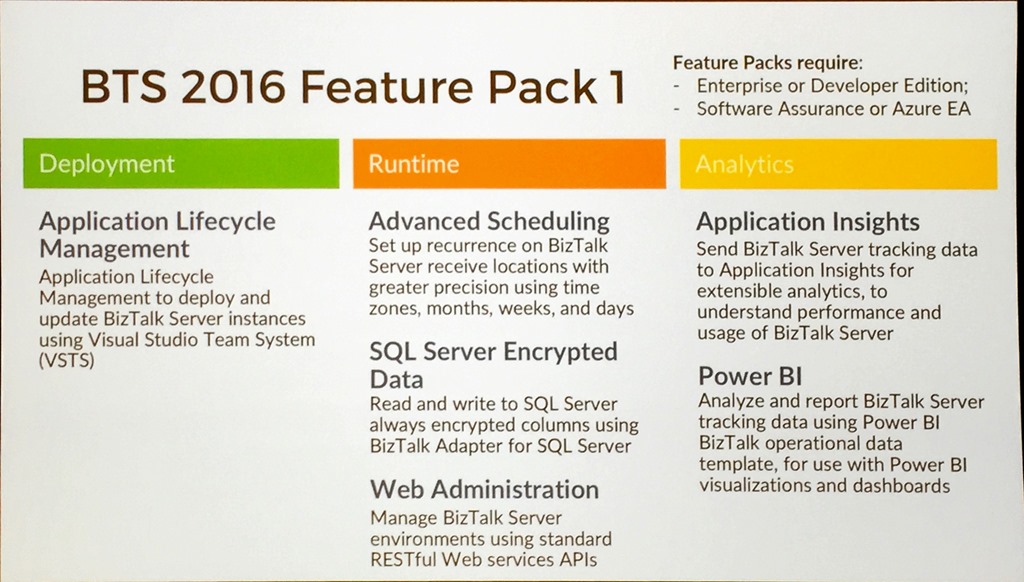

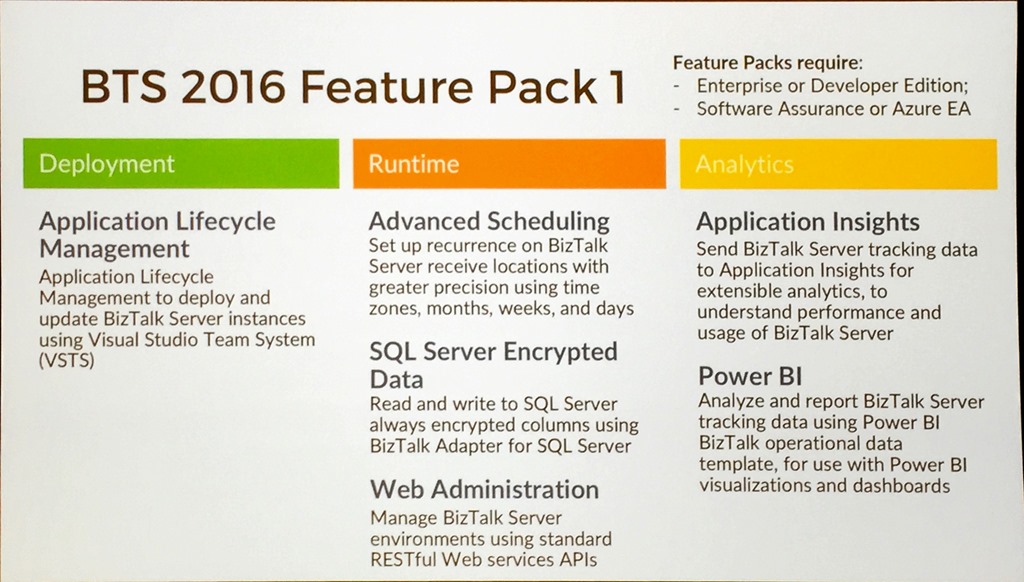

Paul explained the reasoning behind the Feature Pack releases, how it was able to deliver new value at a quicker cadence by introducing non-breaking changes and he reviewed what had been delivered in Feature Pack 1.

The information was split between Deployment – application lifecycle management; Runtime – advanced scheduling, SQL encryption columns and web admin; and Analytics – AppInsights for tracking and the Power BI template.

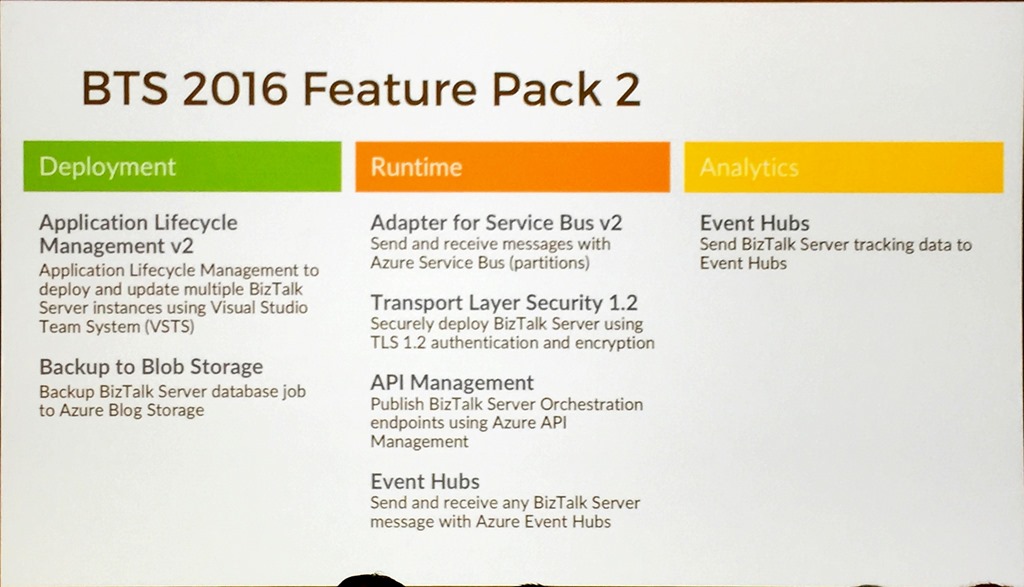

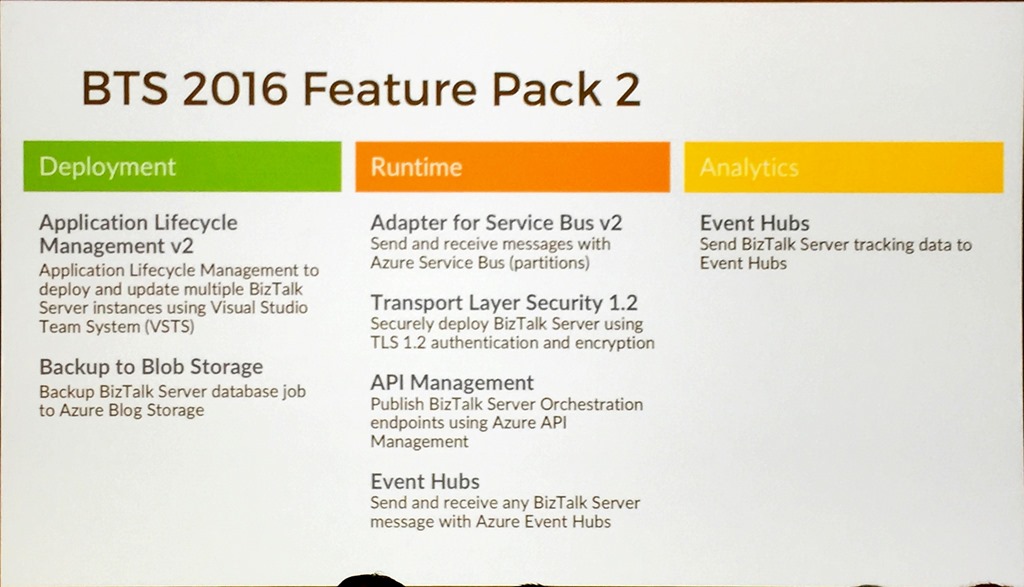

He then mentioned that Feature Pack 2 would be released next month!

Splitting the information the same way we had Deployment – application lifecycle management for multiple servers and backup to Blob Storage; Runtime – Adapter for Service Bus v2, TLS 1.2 (although this may be in the next Cumulative Update as it is a critical update), using API Management to expose Orchestration endpoints, and sending/receiving from Event Hub; Analytics – sending data to Event Hub for tracking.

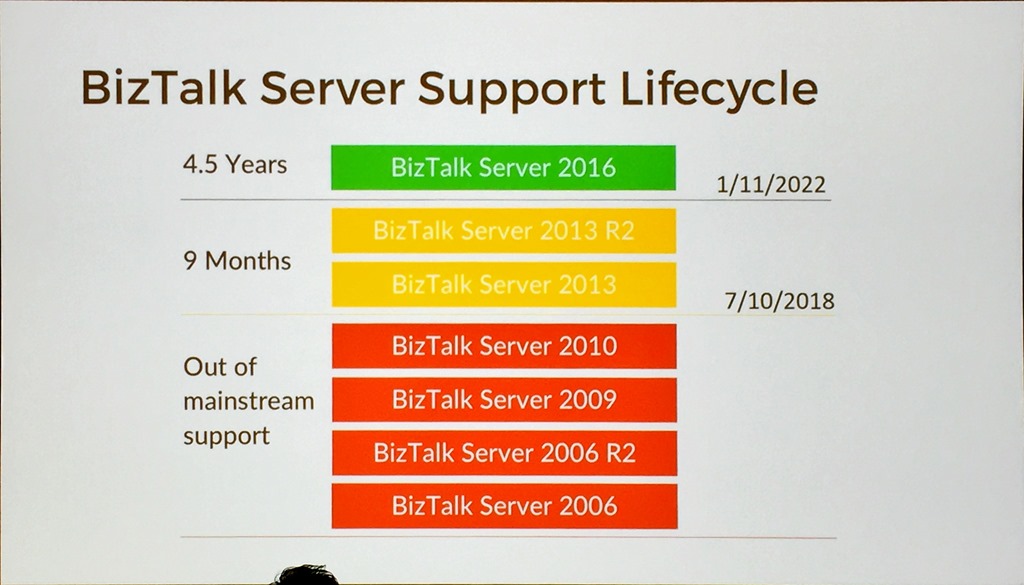

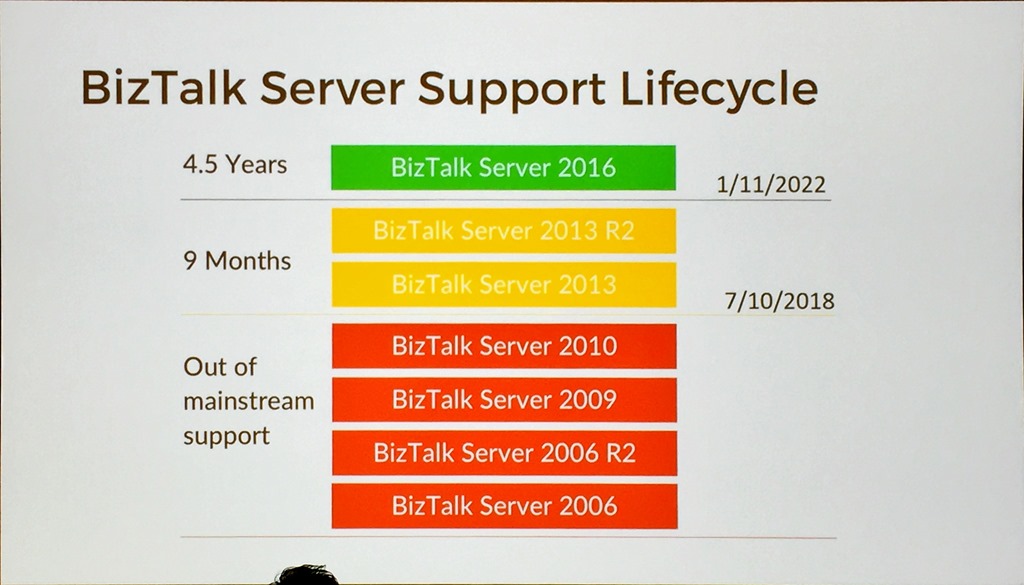

He walked through the BizTalk Server Support Lifecycle.

This shows that BizTalk Server 2013/2013 R2 is out of mainstream support in 9 months and that people should at least start thinking about migrating. NOTE: A cool tool to help with this migration was presented by Microsoft IT on Day 2 and is available for use.

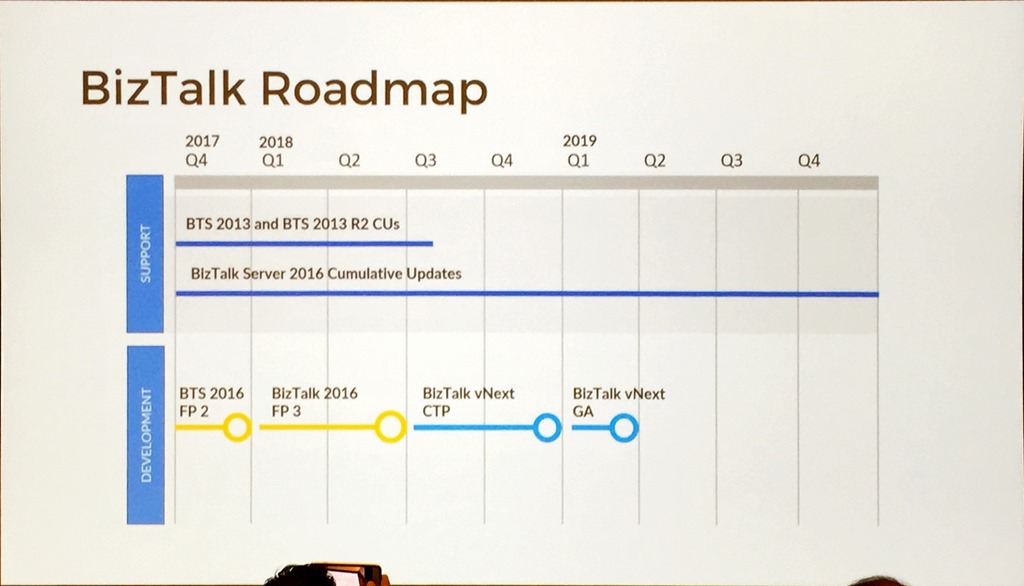

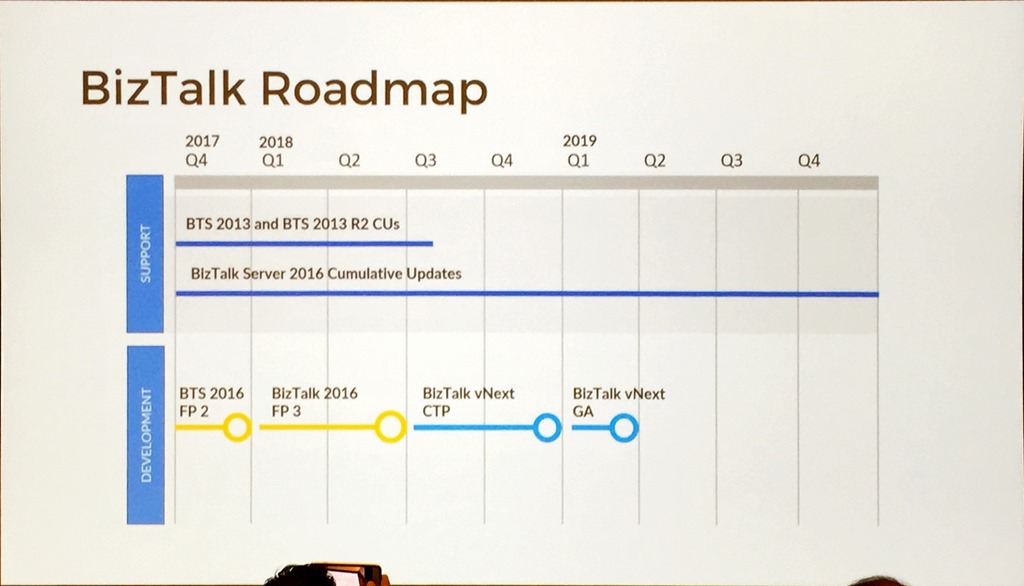

The most important slide was the BizTalk Roadmap.

This clearly shows an ongoing commitment to the product with a timeline for CUs, Feature Packs, and BizTalk vNext.

With that, Paul wrapped up and we had a break, followed by Jeff and Kevin.

Jeff Hollan/Kevin Lam – Azure Logic Apps – build cloud-scale integrations faster

You always know you’re in for a great session when these two stand up, and this session did not disappoint.

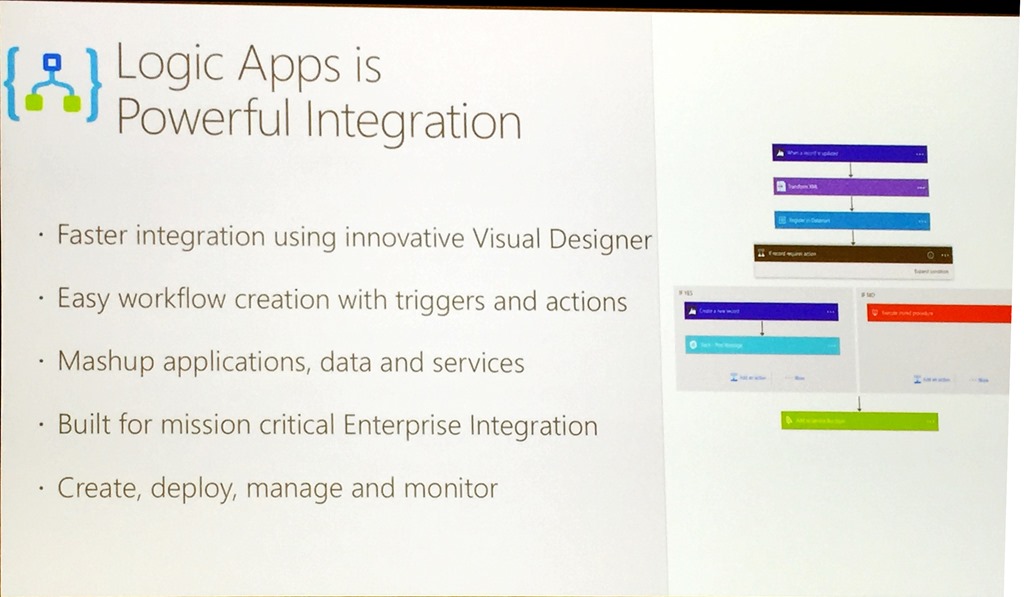

It was aimed at being a level-setting session to bring people up to speed on Logic Apps, what they are and why you’d use them.

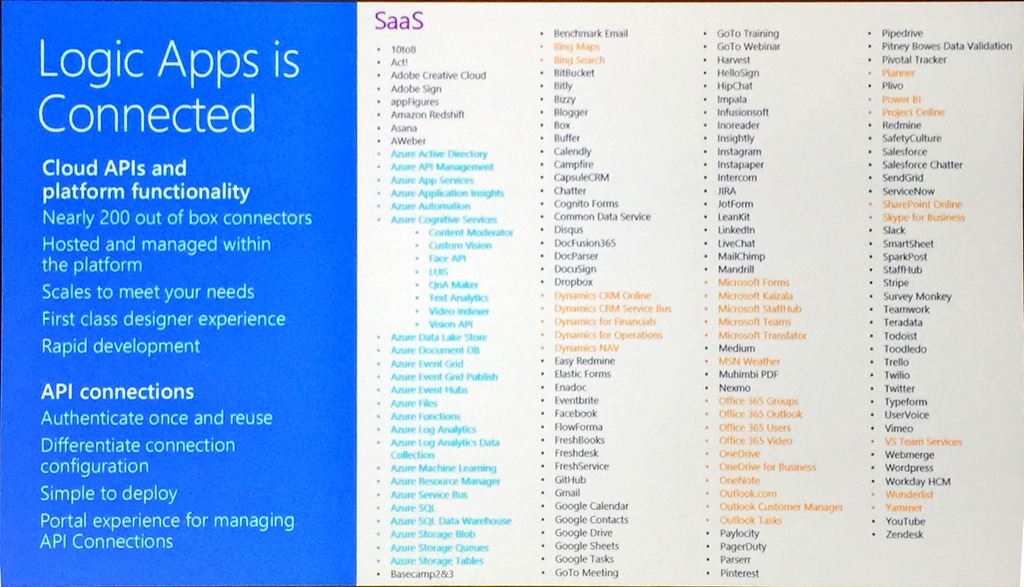

To help emphasize the growth of the service, Kevin mentioned that at GA in June 2016 there were about two dozen connectors, now there are nearly 200!

Connectors provide a canonical form for integration that scales to meet the needs of the customer.

A slide was shown that had an animation of the current connectors that went on for a few pages and included colours to indicate connectors to Azure Services (blue) and those to other Microsoft services (orange), along with a list of others really showing how much coverage Logic Apps has.

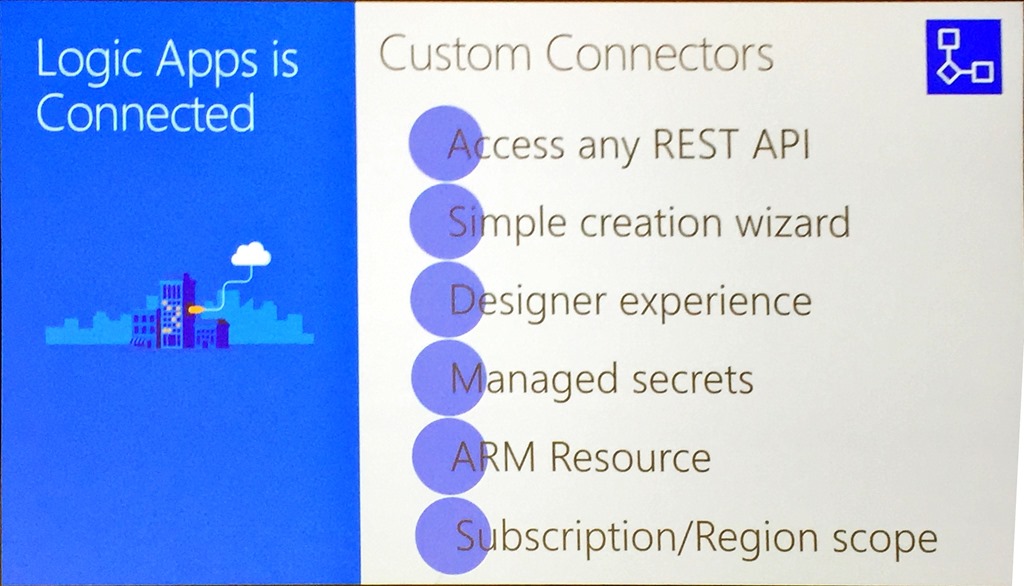

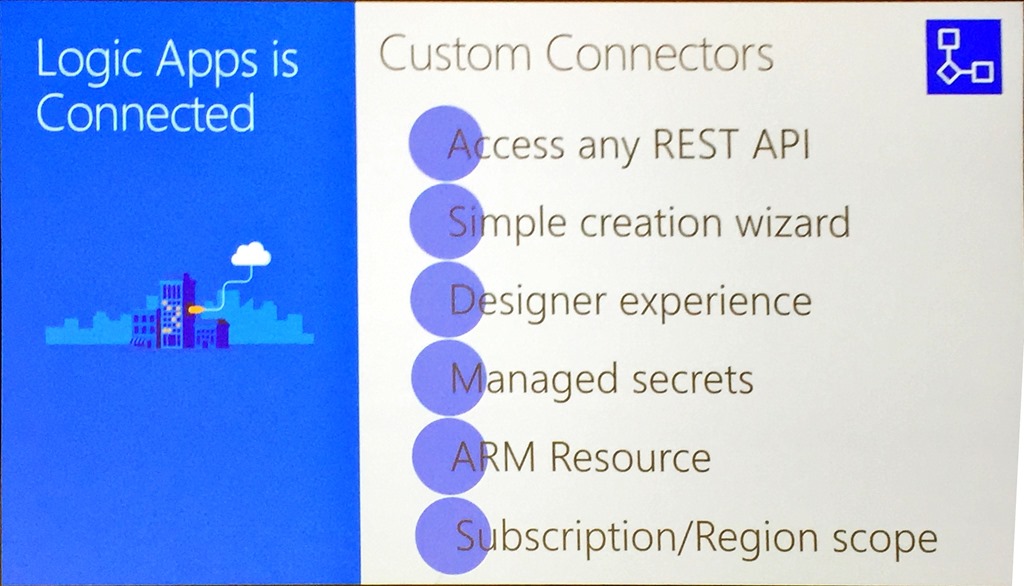

One of the new features was shown – custom connectors.

Custom connectors are available now and treated just the same as any other connector, including storing secrets in the Logic Apps secret store just like regular connectors.

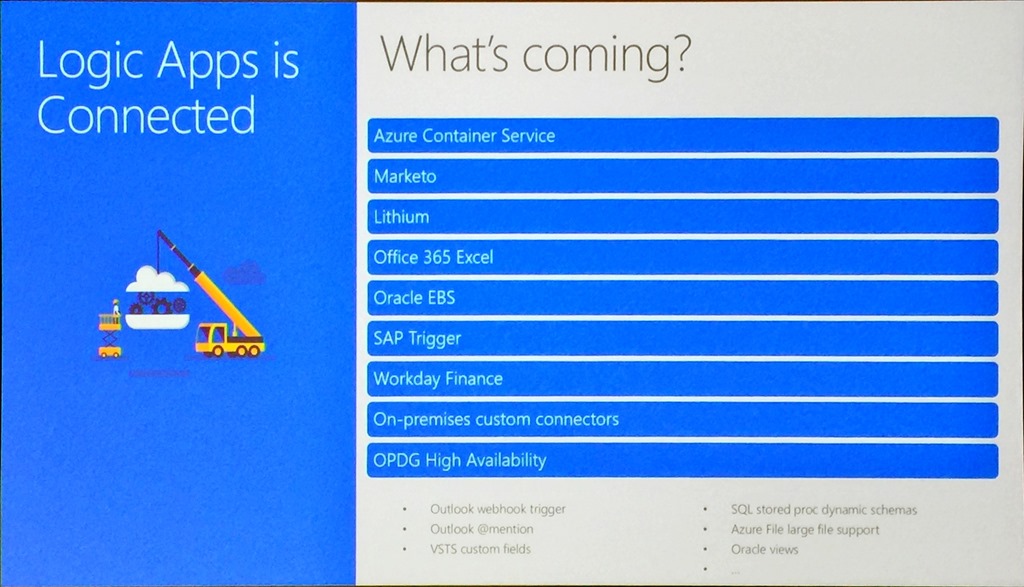

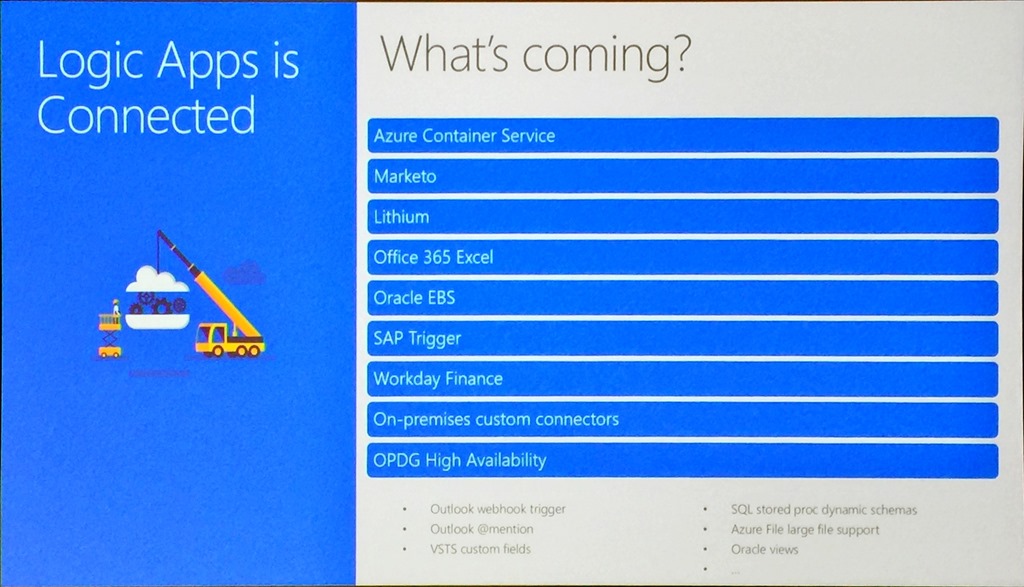

These conferences are great on their own, but when the teams share what’s next and any roadmap information it is particularly interesting. With that, we were teased with what connectors and services are coming soon.

These include the ability to initialize and destroy containers within the Azure Container Service, Oracle EBS and high availability for the on-premises data gateway. I am particularly interested in the container story and can see this as a great way of running transient compute workloads easily and only when required.

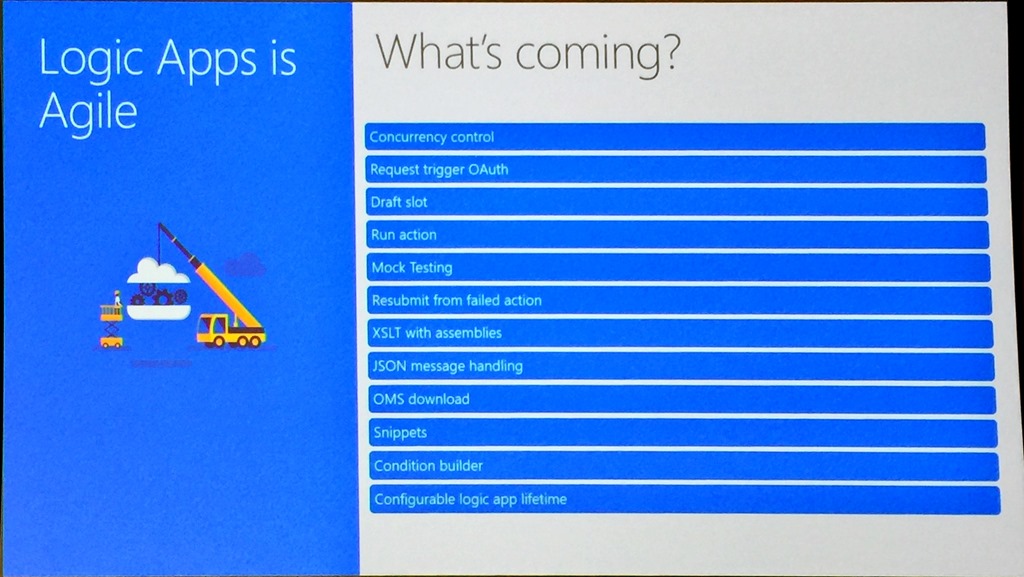

We then moved on to more level setting and to how agile the Logic Apps team is, highlighted by a slide that showed what they have shipped this year, including Visual Studio tooling, nested foreach loops and Ludicrous Mode that allows sharding across the infrastructure to improve performance. Currently, the cadence is roughly a release every two weeks!

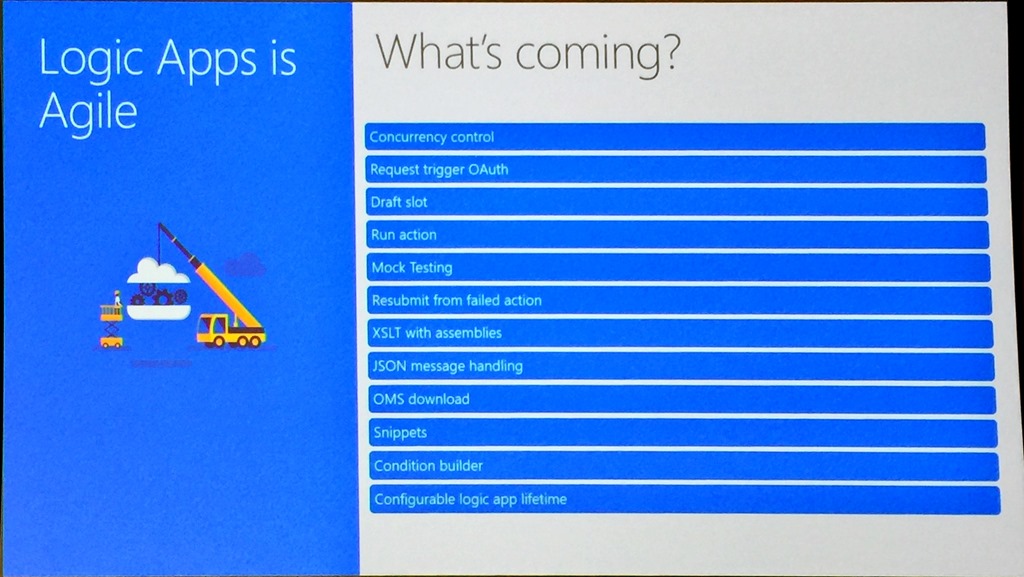

To highlight this agility even more, they showed what was coming soon to the service.

Particularly interesting are mock testing, which lets you stub out connectors that are still being built; resubmitting from a failed action rather than an entire run; concurrency control, which governs how parallel foreach loops run and can be important in ordered delivery scenarios; and snippets, which allow some reusability across your Logic Apps.
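To make the concurrency control point concrete, here is a minimal Python sketch of the general idea, not of how Logic Apps implements it. The names `for_each`, `deliver` and `run` are hypothetical and only illustrate how capping the number of parallel iterations affects completion order.

```python
import asyncio


async def for_each(items, action, concurrency):
    """Run `action` over `items` with at most `concurrency` iterations in flight."""
    semaphore = asyncio.Semaphore(concurrency)
    completed = []

    async def run_one(item):
        # Each iteration waits for a free slot before it starts.
        async with semaphore:
            await action(item)
            completed.append(item)

    await asyncio.gather(*(run_one(item) for item in items))
    return completed


async def deliver(message):
    # Hypothetical action: later messages happen to finish faster.
    await asyncio.sleep(0.05 * (5 - message))


def run(concurrency):
    """Process messages 1..4 and return them in completion order."""
    return asyncio.run(for_each([1, 2, 3, 4], deliver, concurrency))
```

With a limit of 1 the messages complete in the order they were submitted, which is what an ordered delivery scenario needs. With a higher limit the faster iterations can overtake the slower ones.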

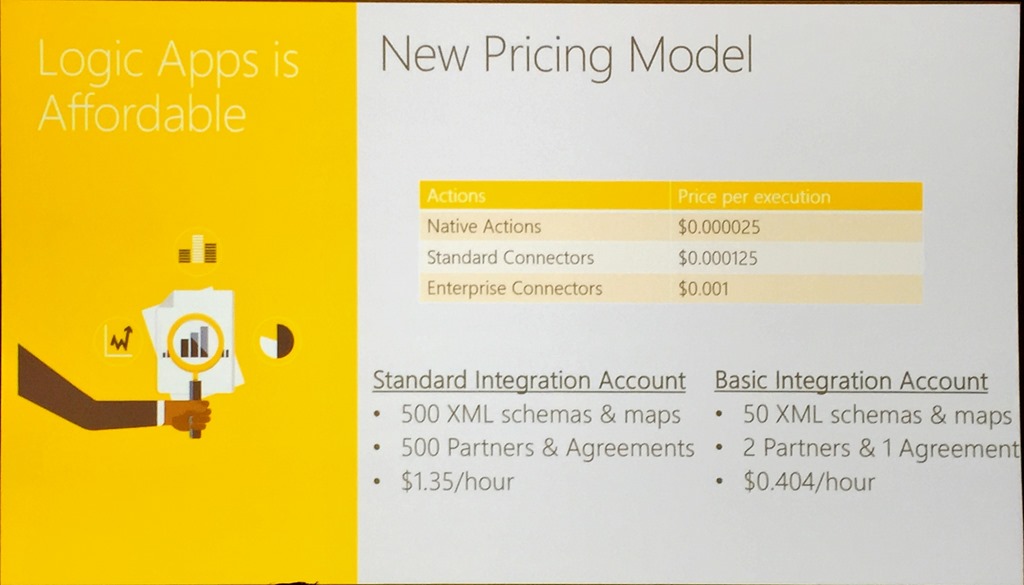

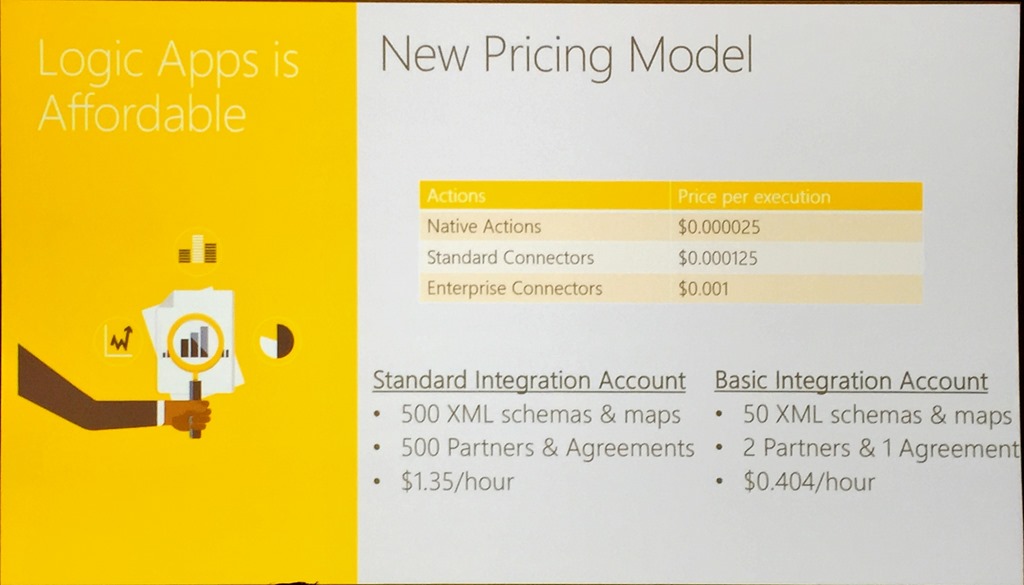

The new pricing model that comes into effect on 1st November was shown. This brings a 32x reduction in the cost of native actions and a 6.5x reduction in the cost of standard connectors, and brings enterprise connectors in line with other connectors, based on pay per execution.

The pricing changes also apply to Integration Accounts, which come down to a third of their previous price.

With that Jeff wrapped up with another great demo for Contoso Fitness showing how to integrate a Flic button to emulate a customer pushing a button on a fitness machine when it needed maintenance or cleaning, sending an alert via an HTTP trigger to ServiceNow.

We then had a change in presentation order, with Vlad and Miao covering API Management.

Vladimir Vinogradsky/Miao Jiang – Bolster your digital transformation with Azure API Management

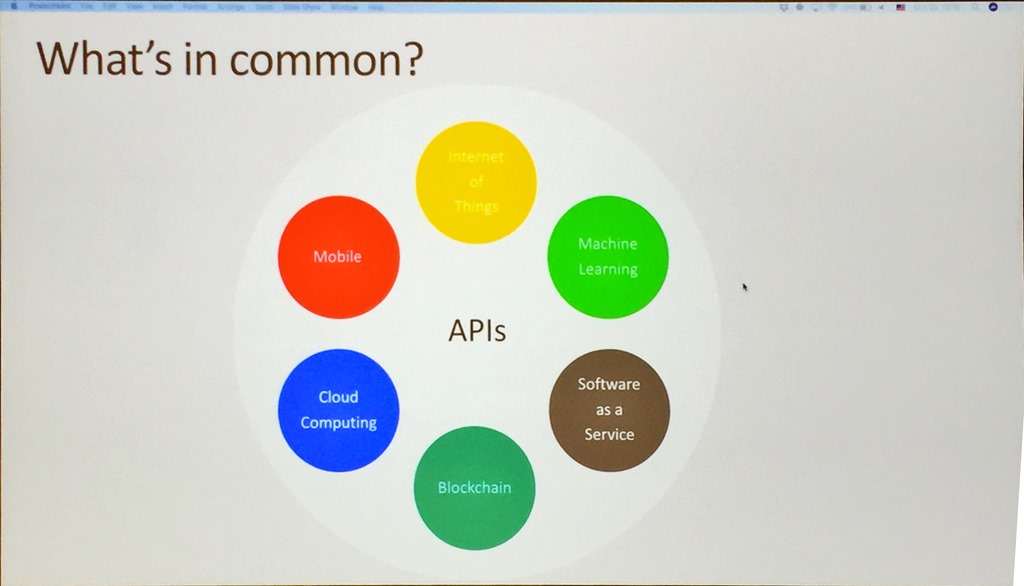

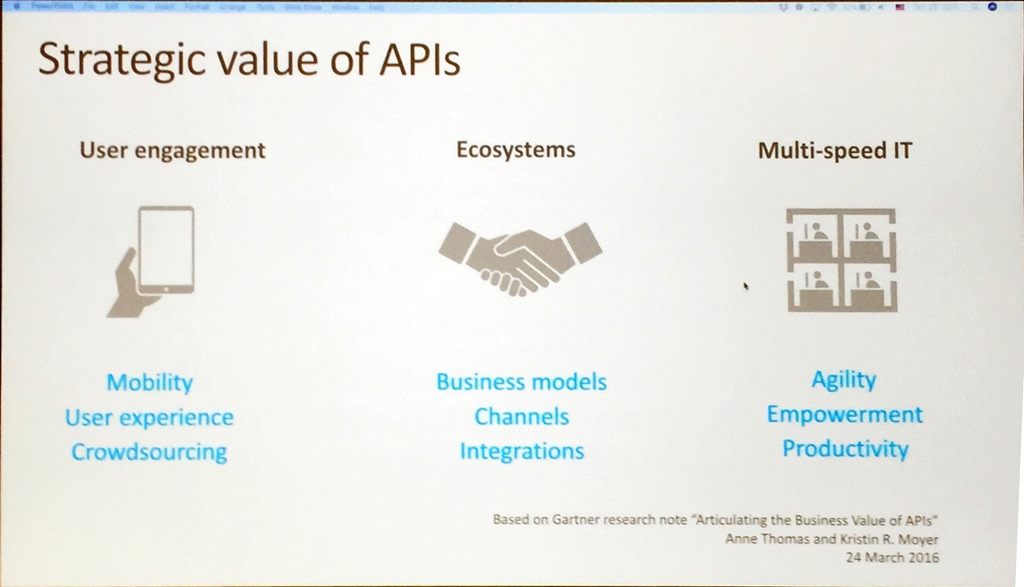

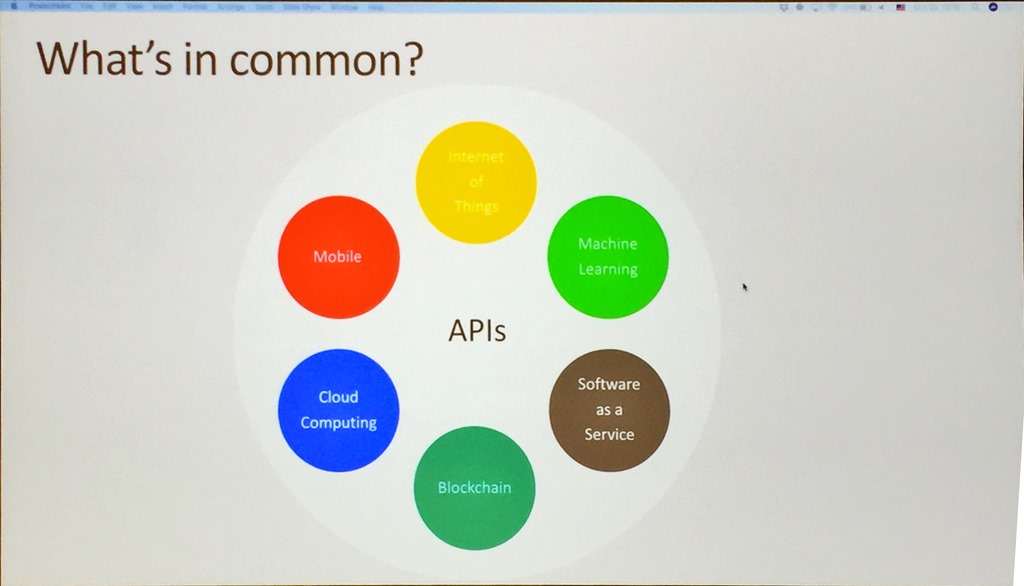

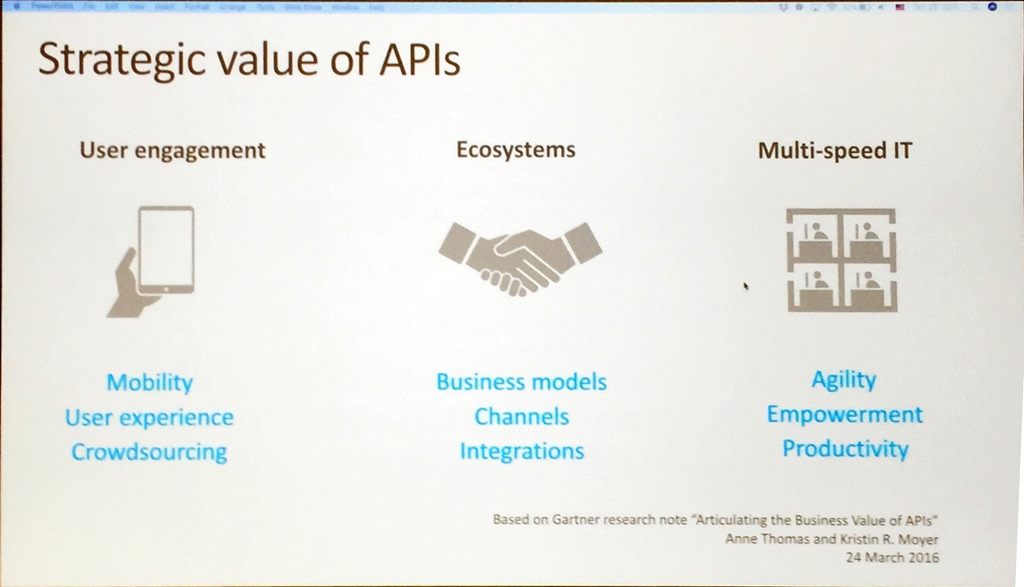

Vlad provided a great overview of API Management and showed how APIs, in general, are really the common component of any solution, whether that is a Software as a Service product or the Internet of Things.

He continued by explaining how API Management is positioned and how it can be used to drive loyalty, build new services and channels to market and how it can help cope with multi-speed IT where not every part of a solution or business wants the same pace of change.

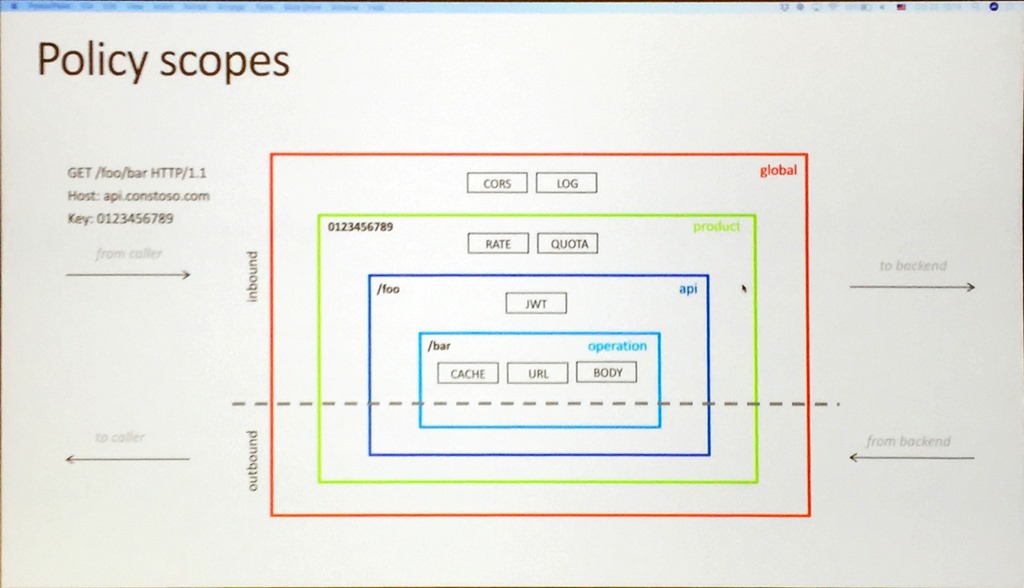

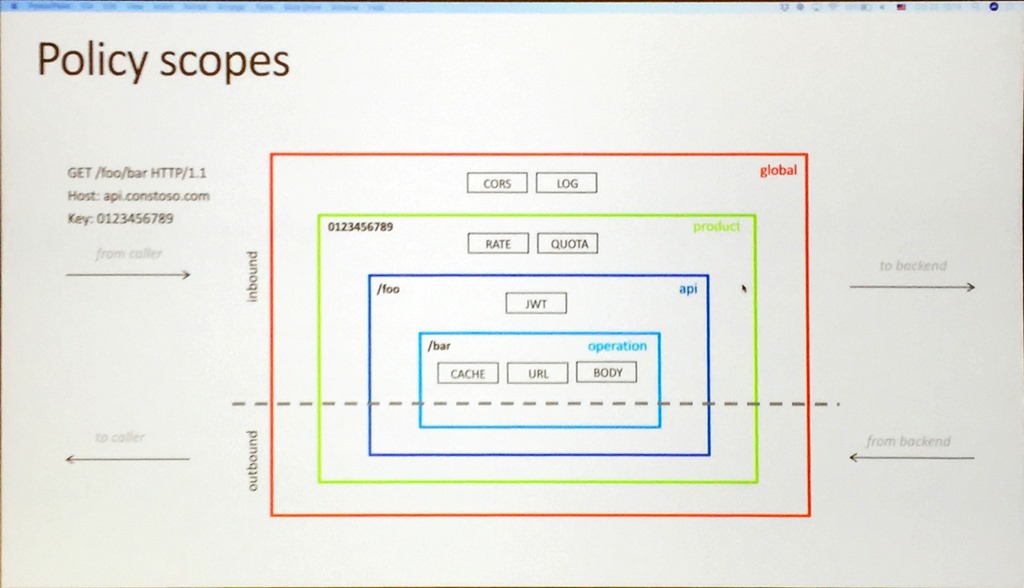

Vlad continued with a general overview of policies and how to use them to enforce certain things like access control and rate limits, and how you can chain them together by explaining the scope and the cumulative nature of policies.
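The cumulative nature of policy scopes can be sketched in a few lines of Python. This is an illustrative model only, not the API Management engine: it assumes scopes are evaluated from the outermost (global) to the innermost (operation), and that a `"base"` marker plays the role of the `<base />` element, marking where the enclosing scope's policies are applied. The function name and policy names are hypothetical.

```python
def effective_policies(scopes):
    """Expand policies from the outermost scope inwards.

    `scopes` is ordered outermost first (e.g. global, product, API, operation).
    Each scope is a list of policy names; the marker "base" stands for the
    point where the enclosing scope's policies are applied.
    """
    effective = []
    for policies in scopes:
        expanded = []
        for policy in policies:
            if policy == "base":
                # Pull in everything inherited from the enclosing scopes.
                expanded.extend(effective)
            else:
                expanded.append(policy)
        effective = expanded
    return effective


# Hypothetical example: global rate limit, product header check, API header rewrite.
chain = effective_policies([
    ["rate-limit"],
    ["base", "check-header"],
    ["set-header", "base"],
])
```

In this model a scope that leaves out the `"base"` marker does not inherit anything from its parents, which is why the position (and presence) of the marker matters when chaining policies.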

After a discussion about security, the conversation moved on to the inclusion of VNets to help control access to on-premises APIs and then multi-region support and scaling that is available as a premium feature. This allows you to deploy units of scale across regions, includes request caching out of the box, allows incremental growth of APIs, and allows different scales in different regions. It is a great way to grow your APIs as your business grows.

Miao then did a great demo, showing the key features of the service, firstly showing how to create an API, including SOAP to REST to allow more modern access to heritage APIs.

Using the Developer Portal to allow testing of the APIs he showed how to apply a number of policies such as removing headers, replacing backend URLs and rate limiting, followed by using the tracing feature to gain insight into the information passed to and from an API call and what policies are applied.

Any enterprise solution requires in-depth insight, so Miao moved on to monitoring and using Metrics in the Azure Portal to set alerts and using it to call a Logic App followed by the Diagnostic settings and Log Searching.

We then moved to looking at the new Power BI template that can be deployed with a single click.

This looks like a great way of delivering insight into an API Management deployment and has been created based on customer asks. It uses Event Hubs, Stream Analytics, and SQL Database.

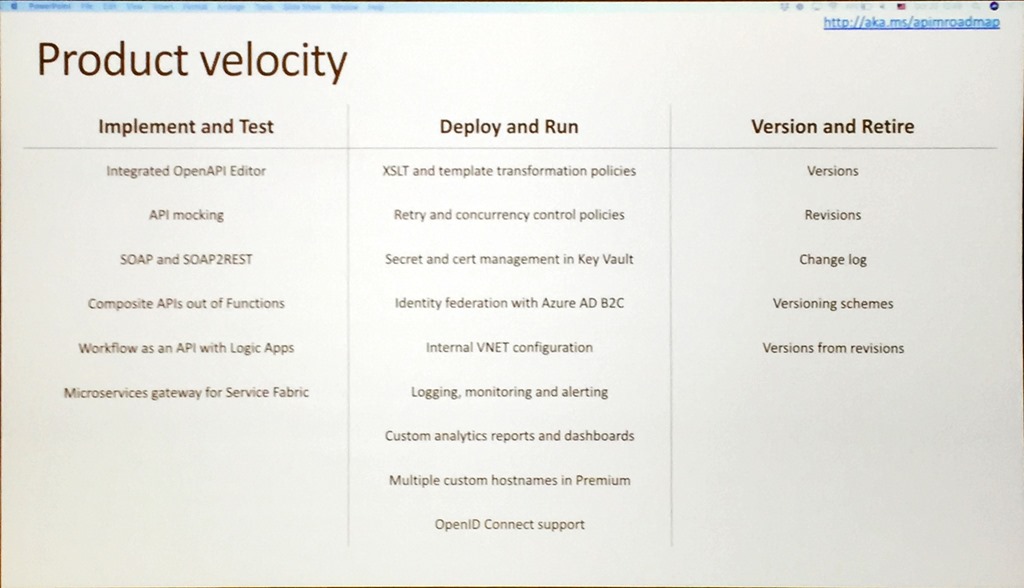

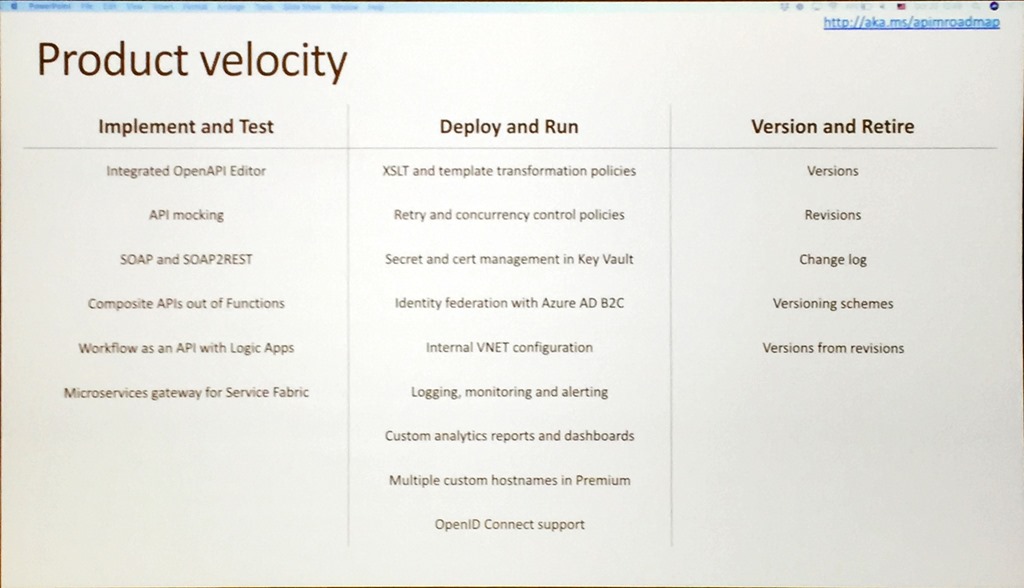

After a slide that showed the growth in API Management, Vlad then showed how much work has been done in the last 12 months.

Like the other presentations, this shows just how agile and engaged the team is and how they are really delivering value to us as users of their service.

With that Vlad provided a list of resources and closed out the morning session.

After lunch, we had 3 presentations on the messaging services within Azure that took proceedings up to the afternoon break.

Dan Rosanova – Messaging yesterday, today and tomorrow

After lunch, Dan kicked off sessions about the messaging services in Azure starting with his own presentation about tools and how Microsoft is really a tools company.

Using a hammer for illustration, Dan gave a great presentation on where a hammer is a right tool and where a hammer is not. This included an unusual demo that showed how to open a beer with a hammer live on stage!

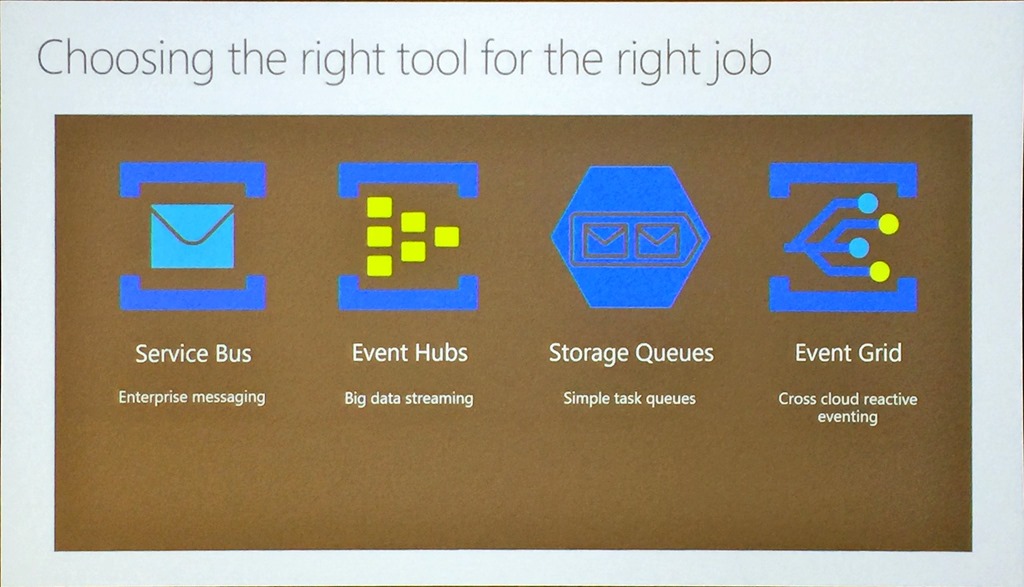

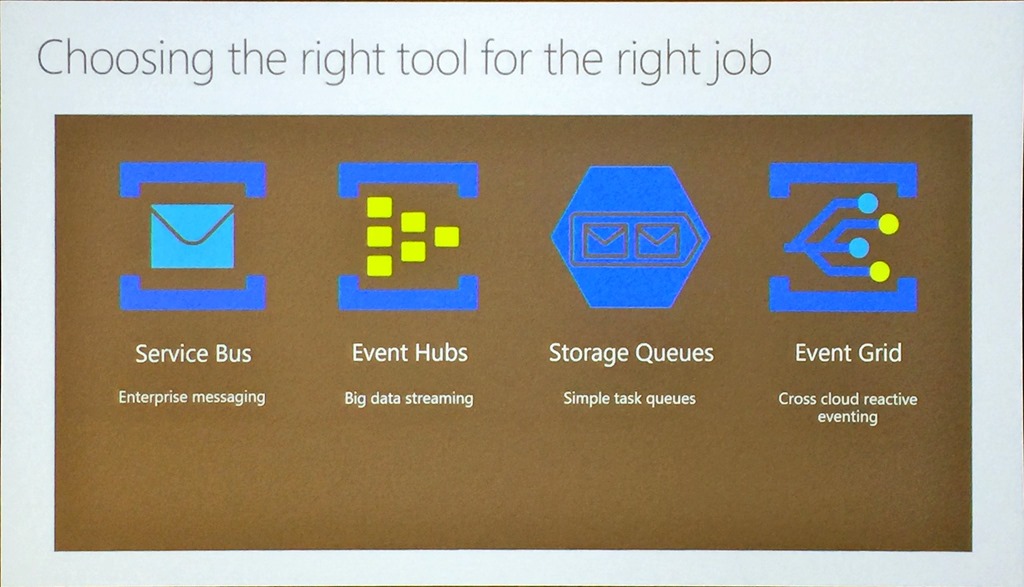

And really that was the main thrust of the presentation, that with Azure messaging being such a large set of tools, it is important to choose the right tool for the job.

To further hammer home the point, he talked about four scenarios to fit these tools:

- Task Queue using a Storage Queue to coordinate simple tasks across compute

- Big data streaming using Event Hub to flow and process data and telemetry in real-time

- Enterprise Messaging using Service Bus to manage business process state transitions

- Eventing using Event Grid to provide a reactive programming model
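The mapping above can be captured as a simple lookup. This is just a sketch of the list as reported here; the dictionary and the `pick_tool` helper are hypothetical names for illustration, not anything shown in the session.

```python
# Scenario -> Azure messaging service, as listed in the summary above.
MESSAGING_TOOLS = {
    "task queue": "Storage Queue",
    "big data streaming": "Event Hub",
    "enterprise messaging": "Service Bus",
    "eventing": "Event Grid",
}


def pick_tool(scenario):
    """Return the service recorded for a scenario, ignoring case and padding."""
    key = scenario.strip().lower()
    if key not in MESSAGING_TOOLS:
        raise ValueError(f"no recommendation recorded for {scenario!r}")
    return MESSAGING_TOOLS[key]
```

Raising an error for an unknown scenario, rather than returning a default, fits the point of the talk: there is no one hammer for every job.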

Dan summed up by saying that Event Grid will be GAed soon and indicated that some new services outside Azure are coming.

Shubha Vijayasarathy – Azure Event Hubs: the world’s most widely used telemetry service

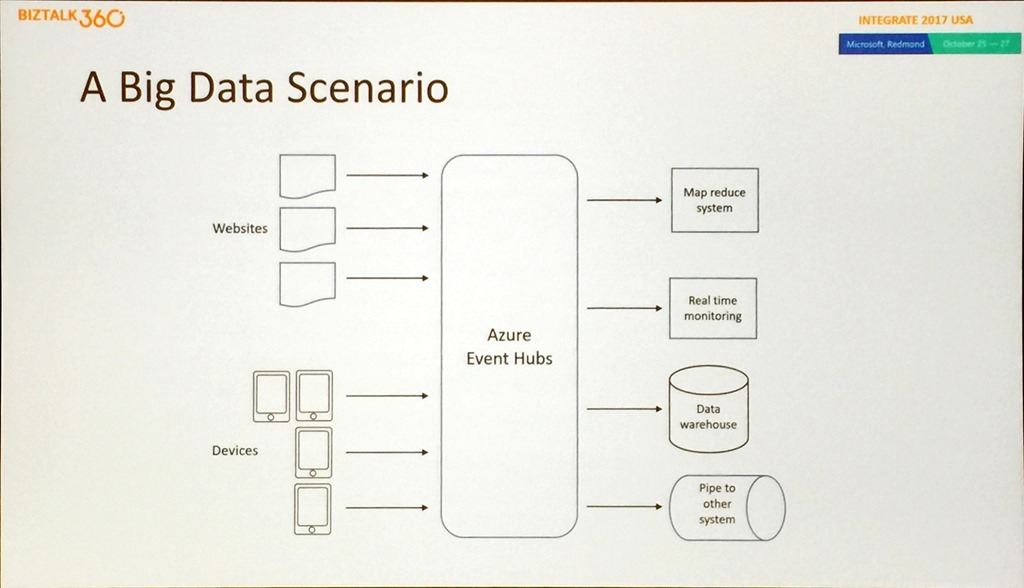

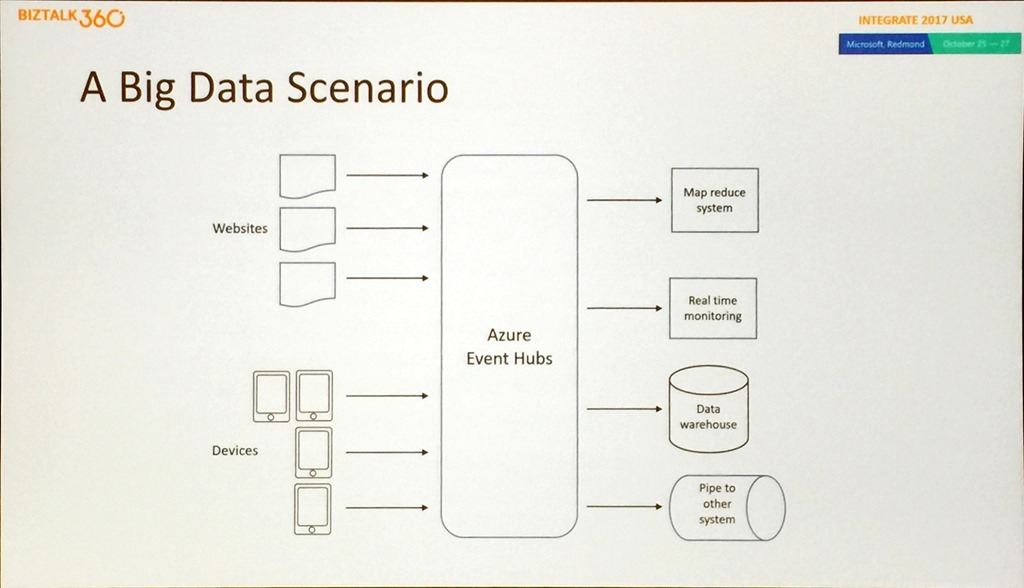

Shubha set the scene using a big data scenario and how Event Hub can be used to provide a single service solution to common problems around telemetry and data pipelines.

She moved on to how Event Hub answers all the typical questions asked about big data solutions, such as how do you handle data that has velocity, volume, and variety, can you deal with regional disasters and do real-time streaming as well as batch capture, what can Event Hub integrate with, and how can you handle support. Again, for any production solution, it is important to be able to lift the covers and see what is happening and how a solution is performing.

Shubha did a great demo showing how to use Event Hubs and Event Grid to move stream data into SQL Datawarehouse using the Capture feature of Event Hub that allows you to persist the telemetry data into a storage account. This demo used an Azure Function to react to an Event Grid event that was fired due to a storage file being created to process data into SQL DW.

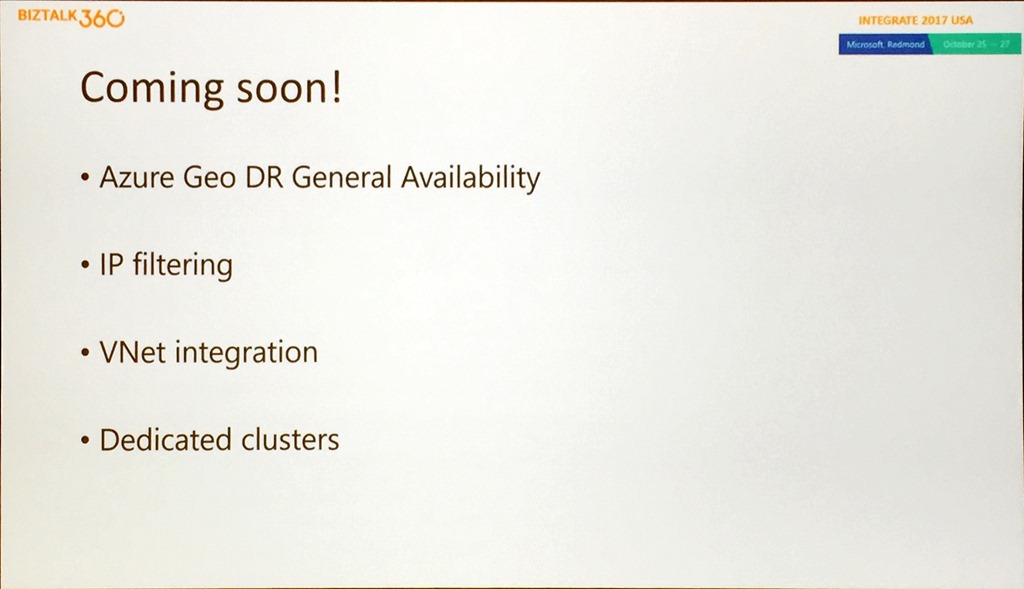

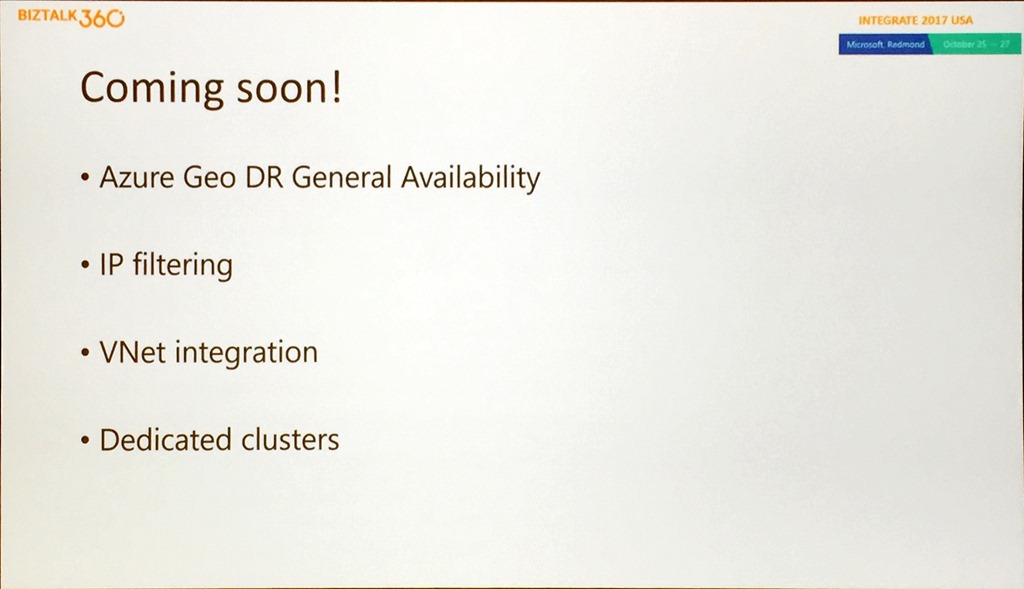

Leaving the best until last Shubha gave some indication of what was coming soon from the team.

This includes the general availability of Geo DR, IP filtering and VNet support and a portal experience for creating dedicated clusters.

We then had a bonus session for the day that was not scheduled.

Christian Wolf – Azure Service Bus: Who doesn’t know it?

So Dan covered the messaging services available, Shubha covered Event Hubs and Christian went on to cover one of the oldest services in Azure.

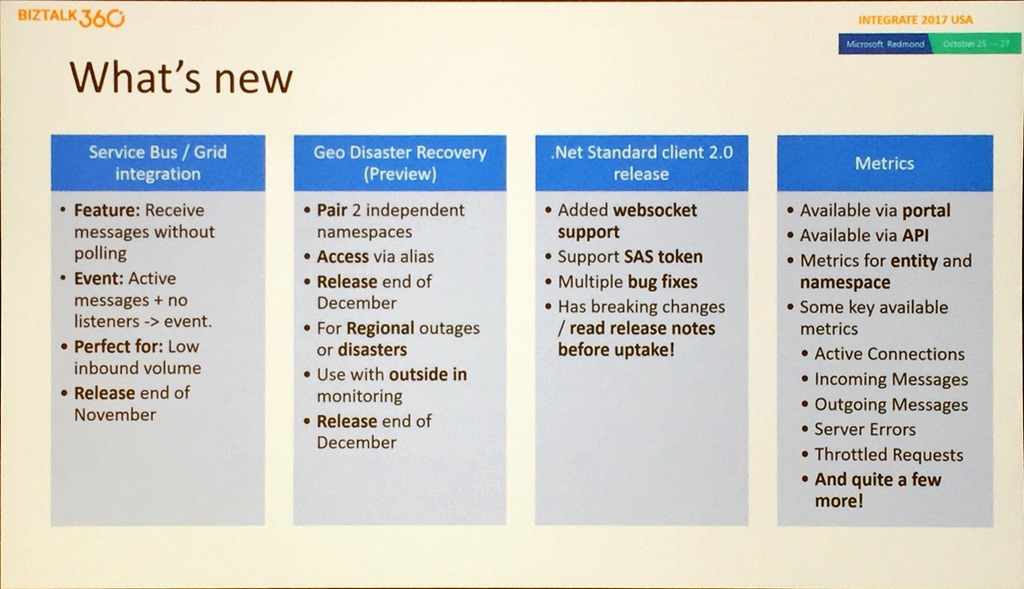

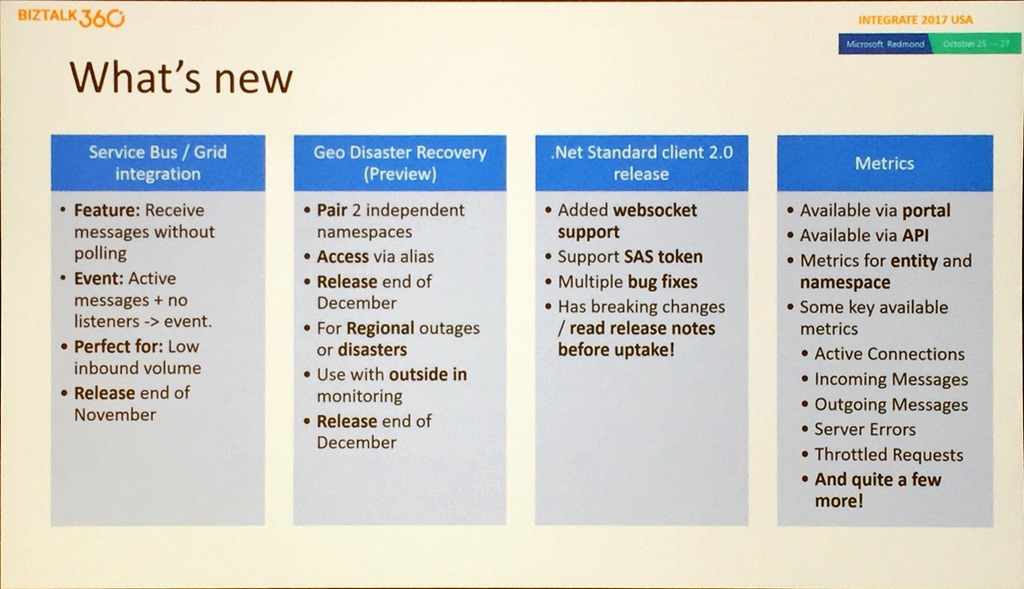

This was a shorter but highly focussed session that started with what is new and soon to be released in Service Bus.

He went through the important points of the slide, including that the Event Grid scenario is for lower volumes and not millions of messages. They are introducing Geo DR for Service Bus that will allow you to pair 2 independent namespaces and access them through an alias. NOTE: In this first release it is only metadata that is failed over between regions, not the data that is on any Service Bus asset.

A good point was made about the .NET Standard Client. It has breaking changes, so Christian urged anyone wanting to adopt it to spend time in the release notes and testing.

Christian then did a couple of good demos, the first using Service Bus and Event Grid to simulate Clemens Vasters wanting to buy an airplane (so a likely scenario!), and using Dynamics 365 to react to a new sales opportunity. The second demo showed the Geo DR capabilities and showed that monitoring is not entirely straightforward. Christian used Turbo360 (formerly known as Serverless360) to help drive the demo.

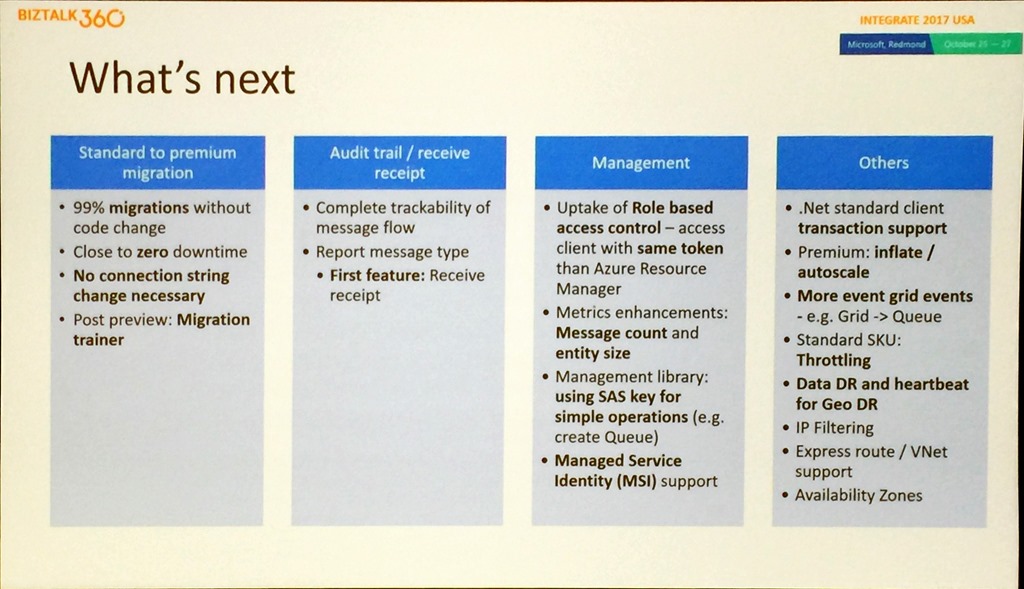

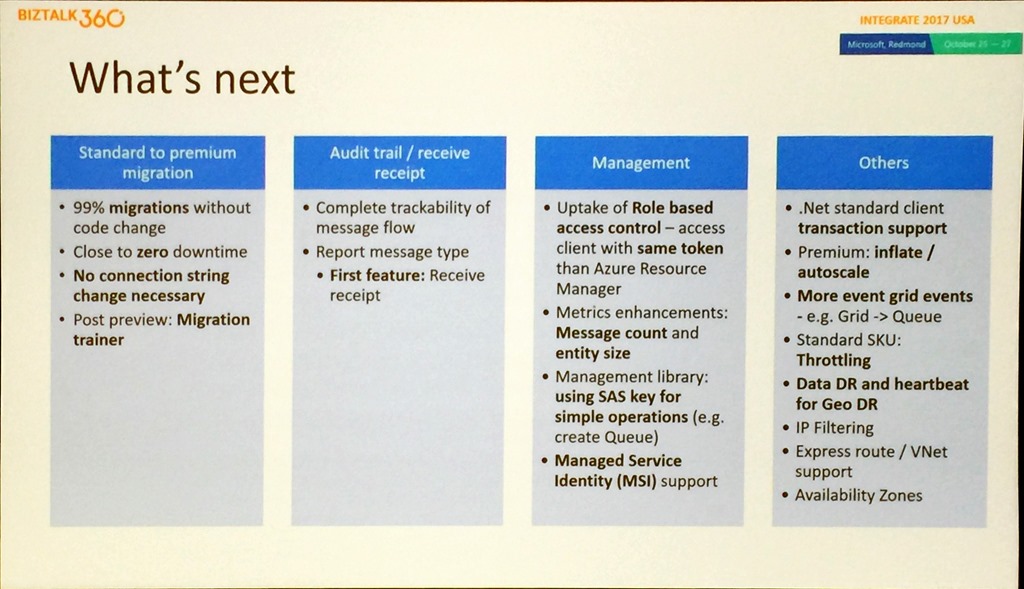

Christian finished with what’s next for Service Bus.

This includes a capability to allow migration between standard and premium SKUs, a new management library, the introduction of throttling in the standard SKU, which is not dedicated, to eliminate noisy neighbours and Data DR as a broader part of the disaster recovery strategy.

This led to the final break of the day, with 2 more presentations standing between attendees and Scott Guthrie’s keynote.

Eduardo Laureano – Azure Functions – Serverless compute in the cloud

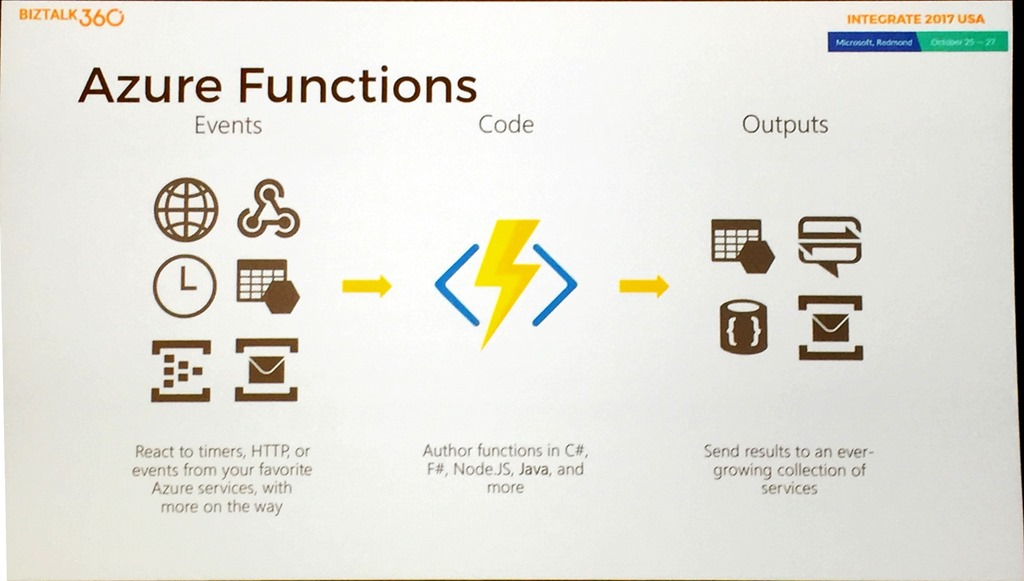

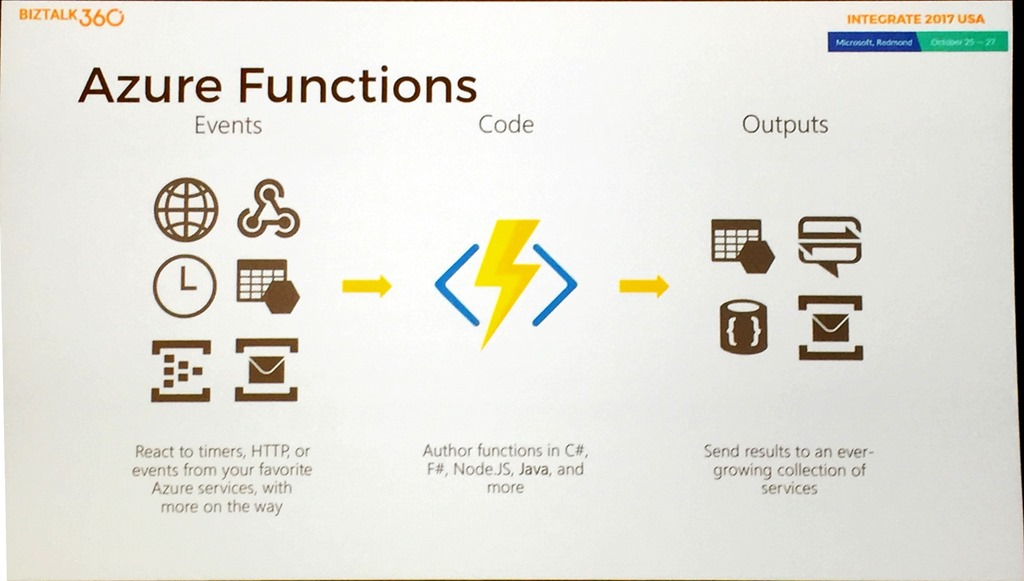

We started with an overview of Functions and the components of the service.

Eduardo explained how Functions evolved: it came from App Service, so HTTP has always been a native part of the service.

Eduardo showed the bindings and triggers, but directed people to the documentation for an up-to-date list.

Following up with a discussion about developer tooling the discussion then turned to Functions by the numbers. The key takeaway from that was when customers go to Functions they are continuing to move more things over time as they evolve their ecosystems.

Eduardo did a demo that really showed the power of bindings by walking through the Function creation process for a Blob Storage trigger, performing a simple file upload, changing the input from Stream to byte[] and showing that it just still works exactly the same way.

After speaking about the difference between Function bindings and Logic Apps connectors (low code v no code, 23 bindings v 180+ connectors, ideal for data flow v ideal for workflow orchestration, data type in code v fully managed) Eduardo explained that as Functions is open source, anyone can go and create a new custom binding, and that he’d be happy to discuss having more community contributed bindings in the service.

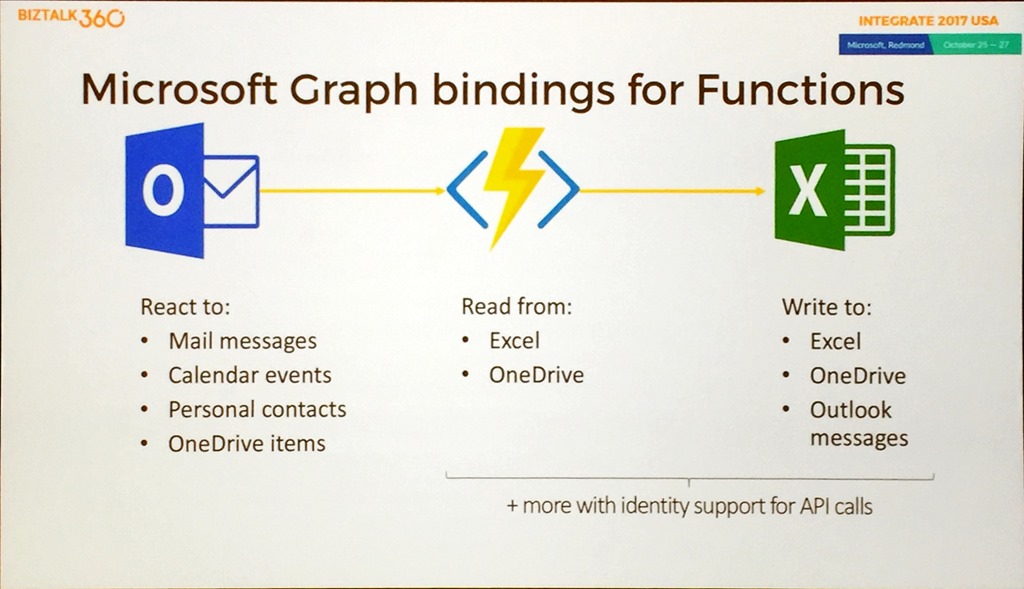

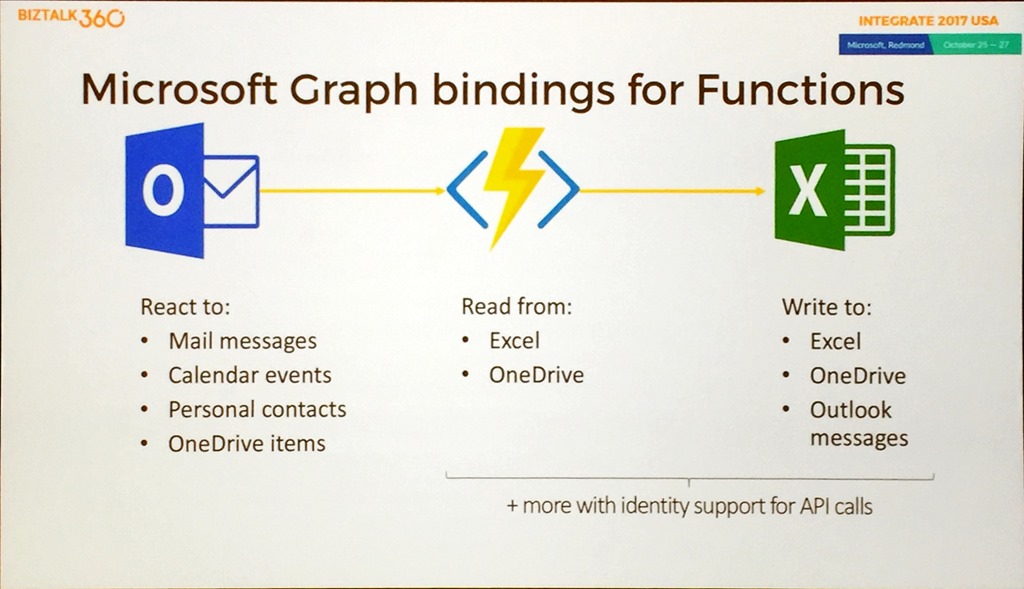

We then moved on to the new Microsoft Graph binding announced at Ignite.

This provides a way of finding correlations across different data sets, but the real magic is that it incorporates identity so you don’t have to.

We had 2 demos, the first showing the Graph binding, and the second showing the new Excel binding with data being added to an Excel file.

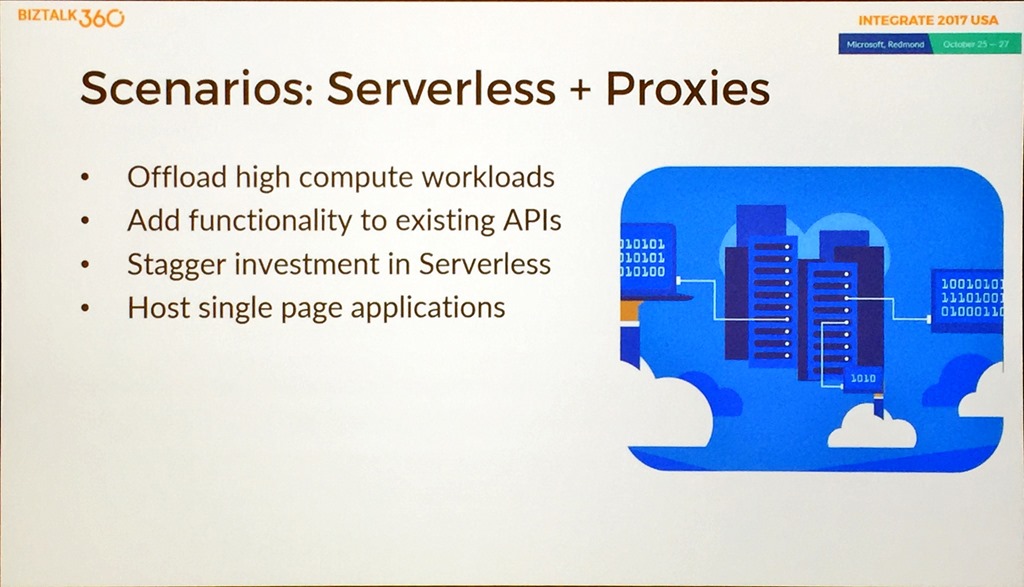

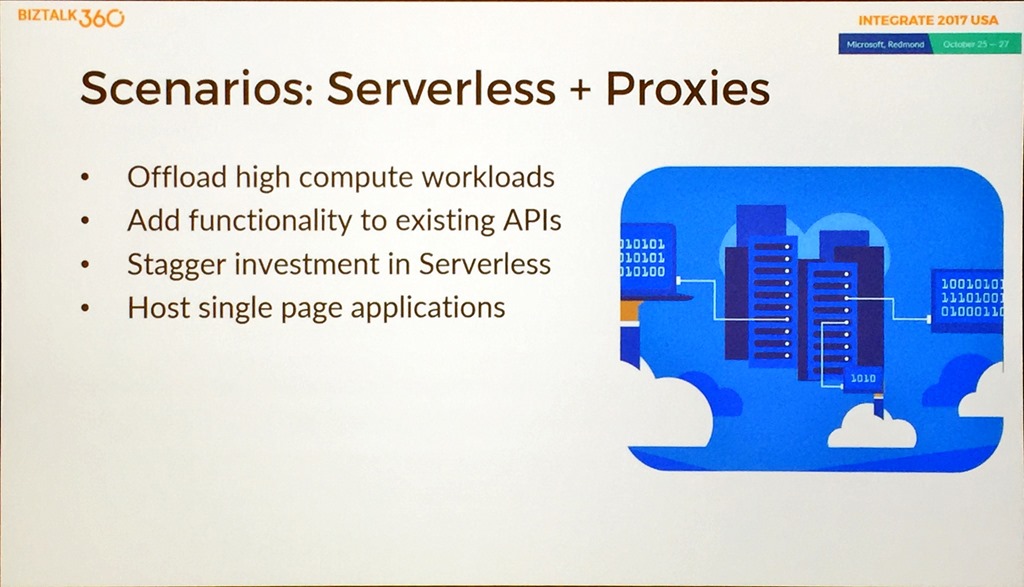

Proxies is a recently added feature that will be going GA soon, so Eduardo spent some time explaining how it works and did a great demo showing how you can use proxies for URL redirection and mocking of responses since you can specify a response payload. He then gave some scenarios where you may want to use proxies.

Like most of the presentations during the day, he finished with a list of takeaways and resources.

The final presentation of the day before Scott was delivered by Jon Fancey.

Jon Fancey – Enterprise Integration with Logic Apps

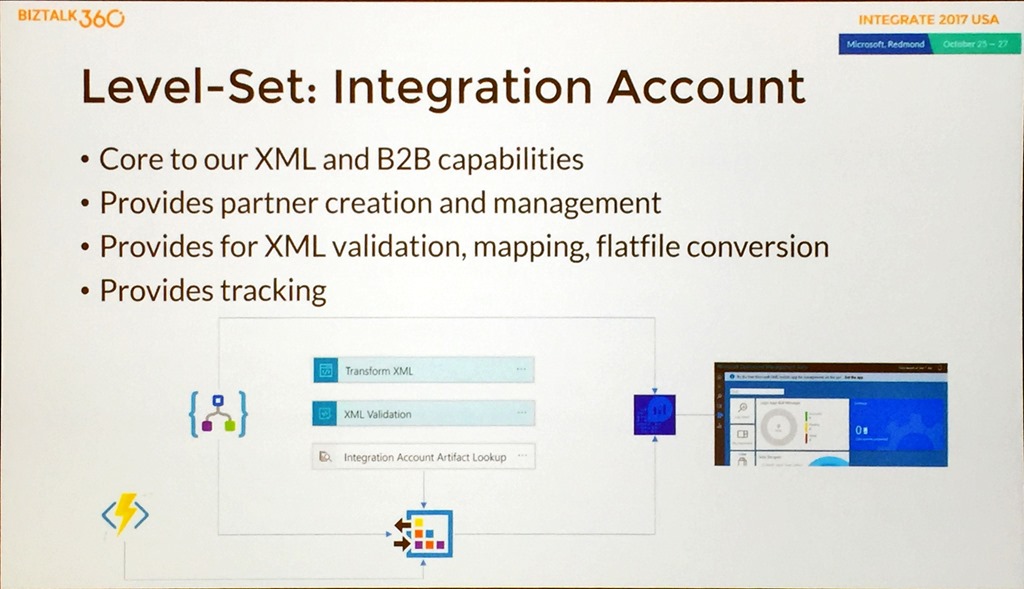

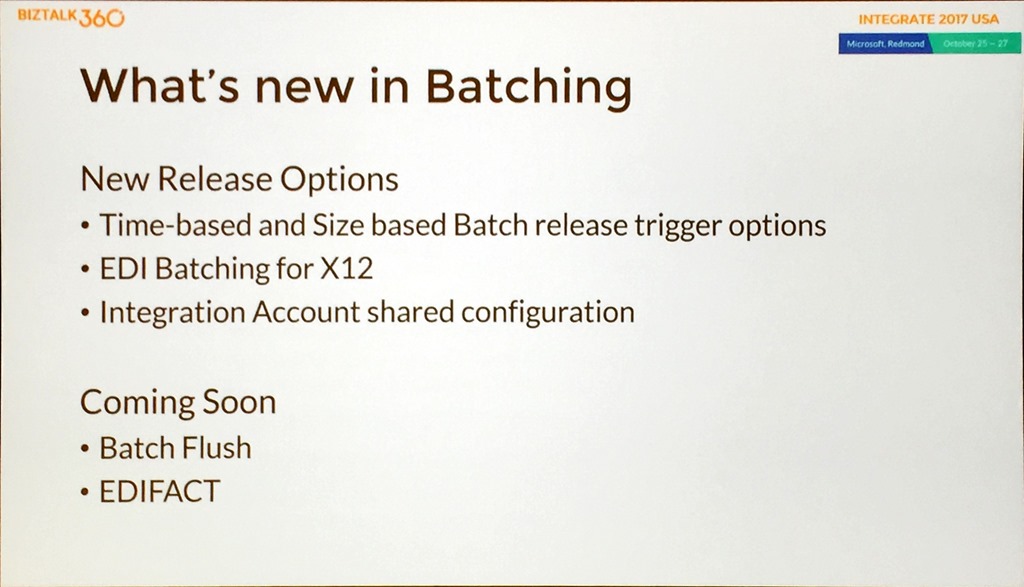

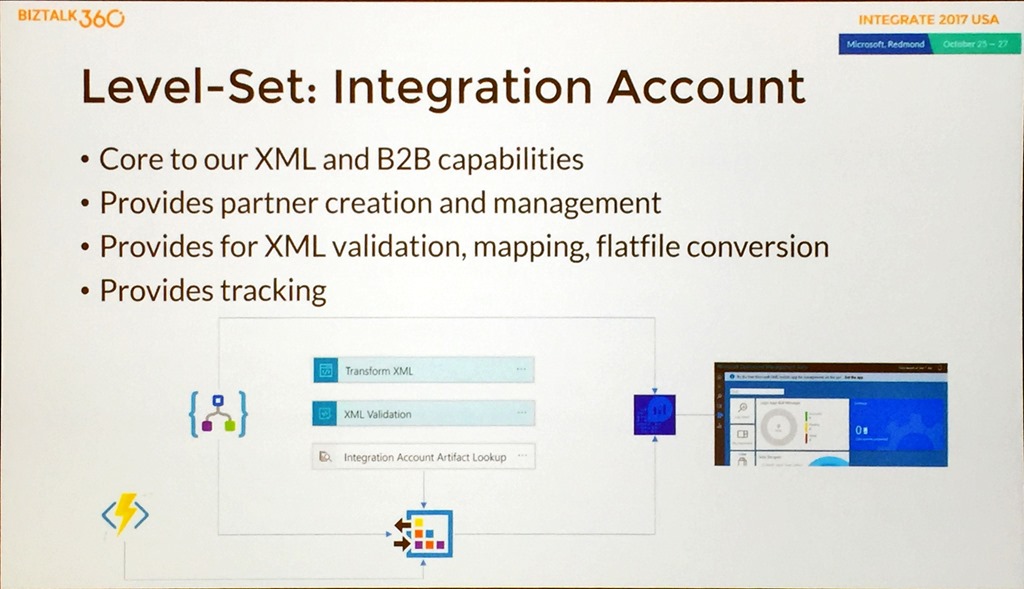

Jon started by level setting and explaining that the Integration Account in Azure is the basic unit of work for Enterprise Integration.

He explained about the XML and B2B capabilities that are provided with the Integration Account and talked about DR scenarios which are important to consider as Integration Accounts hold stateful information. DR is achieved by having a Primary and (multiple) Secondary Integration Accounts in different regions, and the service uses Logic Apps to keep Integration Account states in sync.

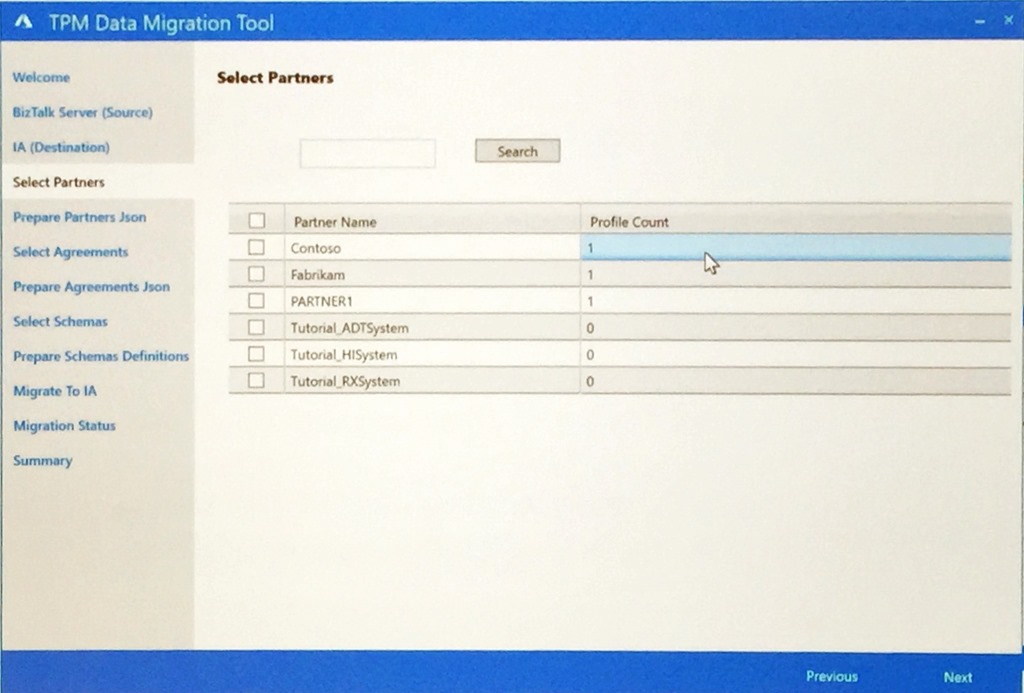

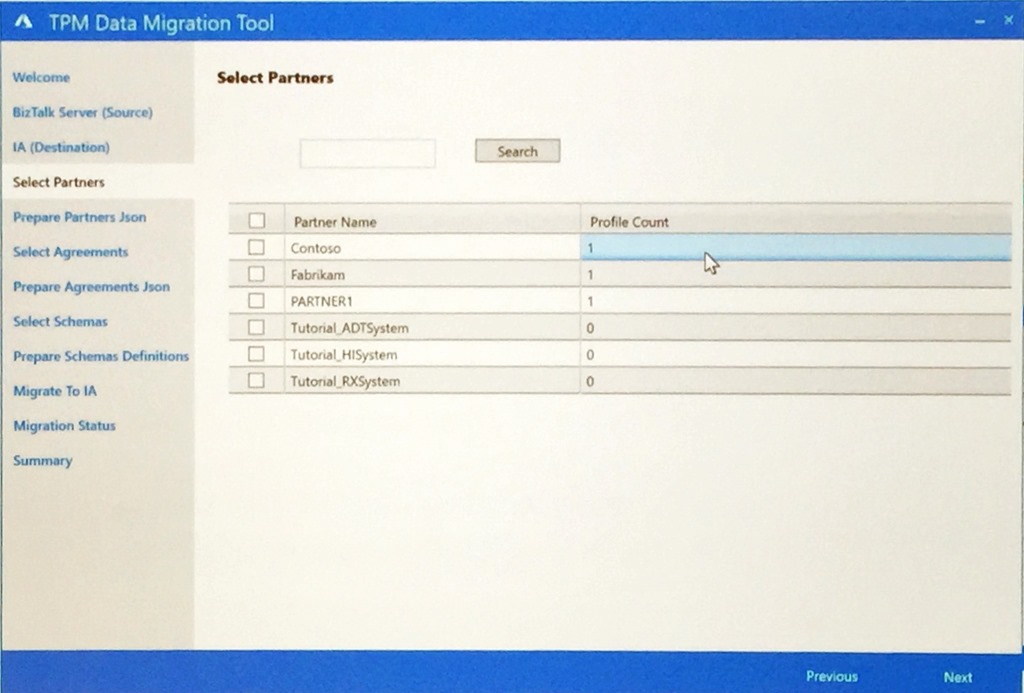

Jon moved on to trading partner migration and a tool (TPM) that has been written to allow customers to easily move trading partners and agreements between BizTalk Server and Logic Apps.

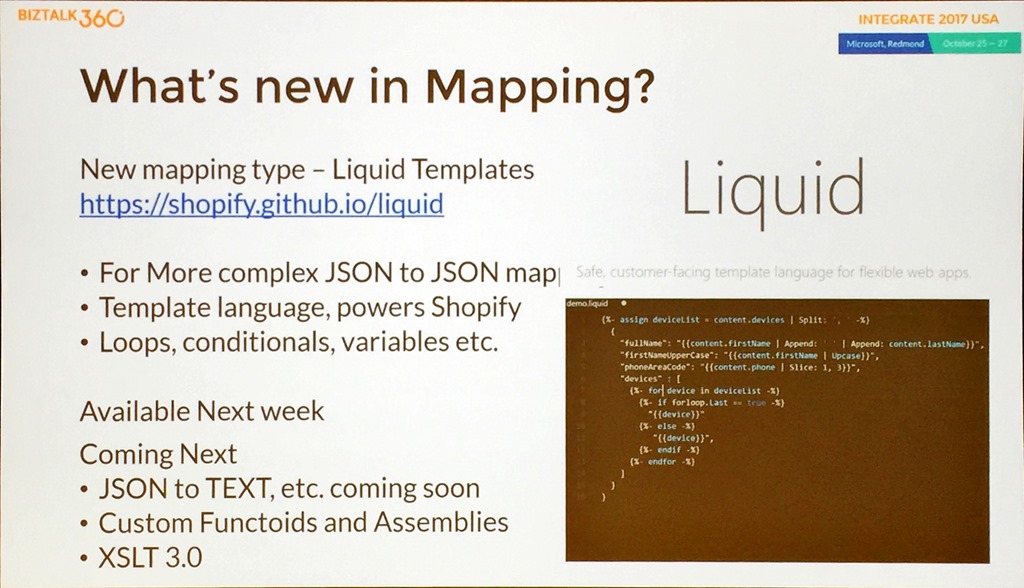

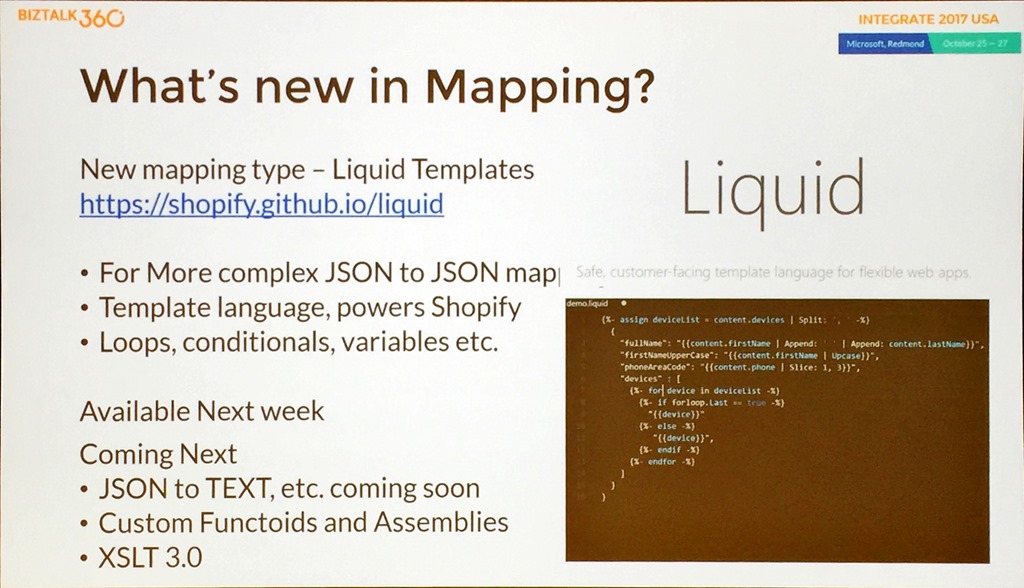

Jon gave an explanation of the traditional VETER pipeline and then moved to what is new in mapping.

With this, he introduced Liquid, which allows mapping between different entity types using a DSL, and did a demo of it using Visual Studio Code.

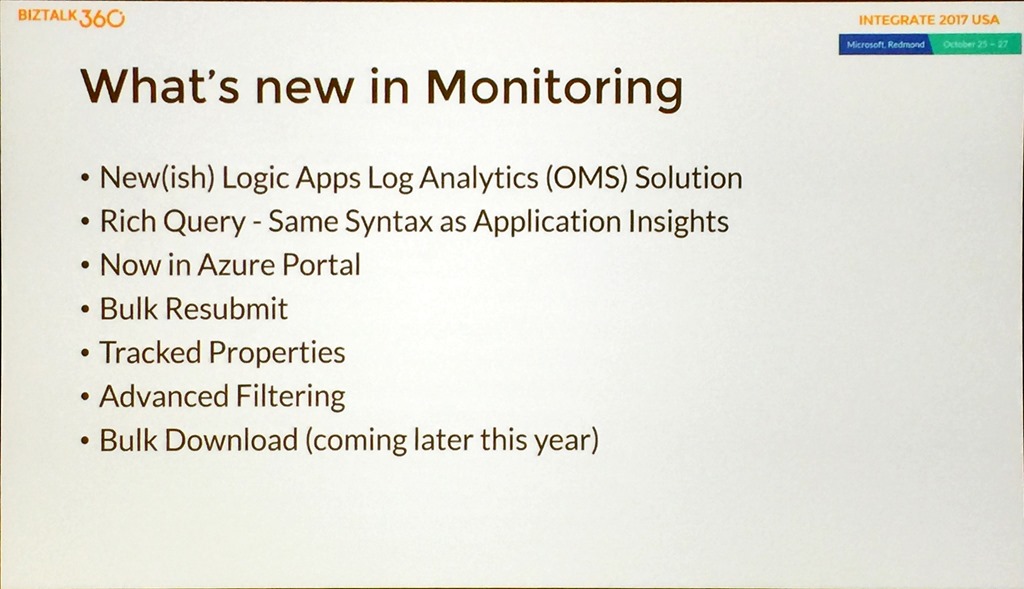

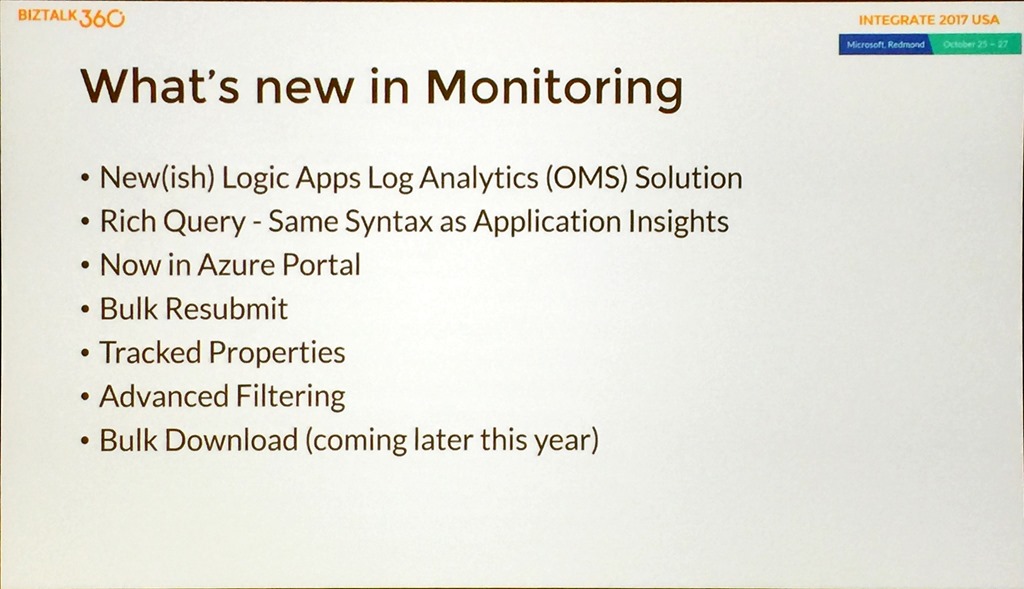

After talking about the tracking features in Logic Apps, Jon gave us a glimpse of what was coming in Monitoring.

Key takeaways from this list are the OMS template and the work around harmonizing the querying capabilities to bring them in line with AppInsights.

Jon did a demo to highlight these features showing OMS in the portal, drilling through the data, showing batch resubmit by looking at Runs and selecting, and tracked properties containing your own tracking, then showed taking a Query and creating a custom tile in the OMS workspace.

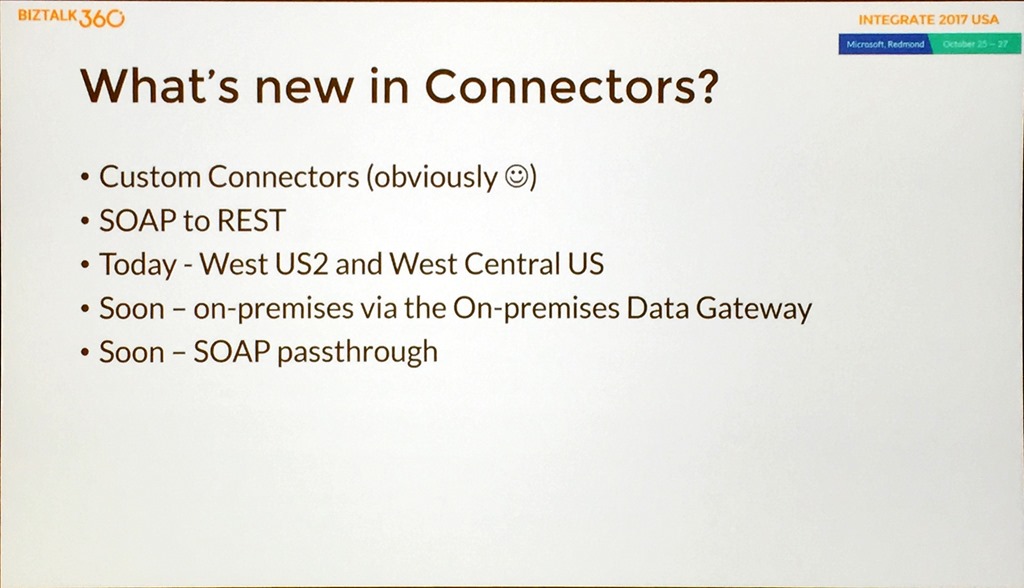

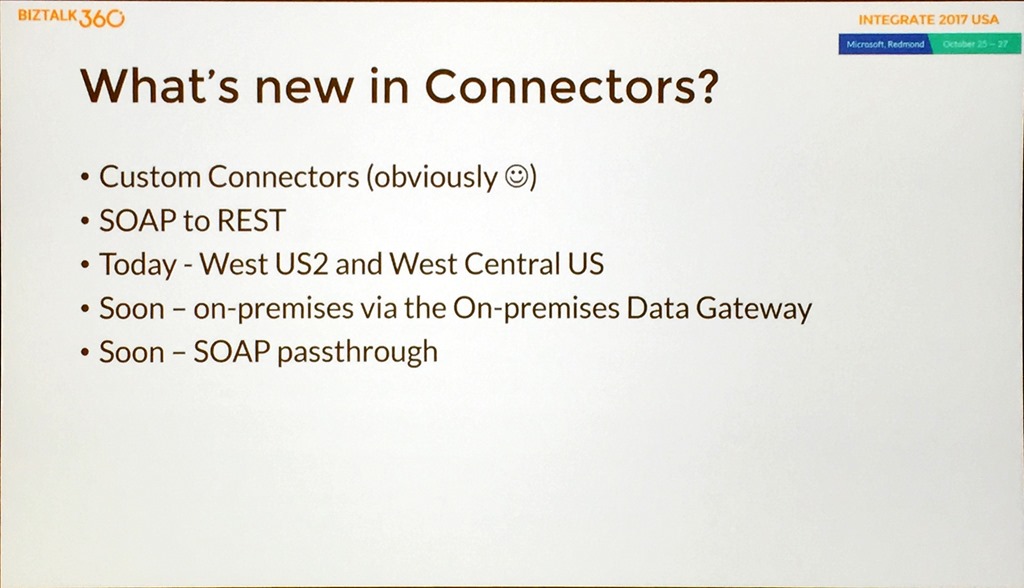

Next up for the “new” treatment was connectors.

There had already been the discussion about custom connectors earlier in the day but it was great to see SOAP to REST, which shipped the same day, to allow even more opportunities to leverage current investments.

Time for another demo, this time looking at SOAP to REST using a custom connector. This was a great demo that involved Jon changing a SOAP app on the fly, adding a new custom connector, then running the service, plus a great He-Man reference, “By the Power of Grayskull”, always a bonus!

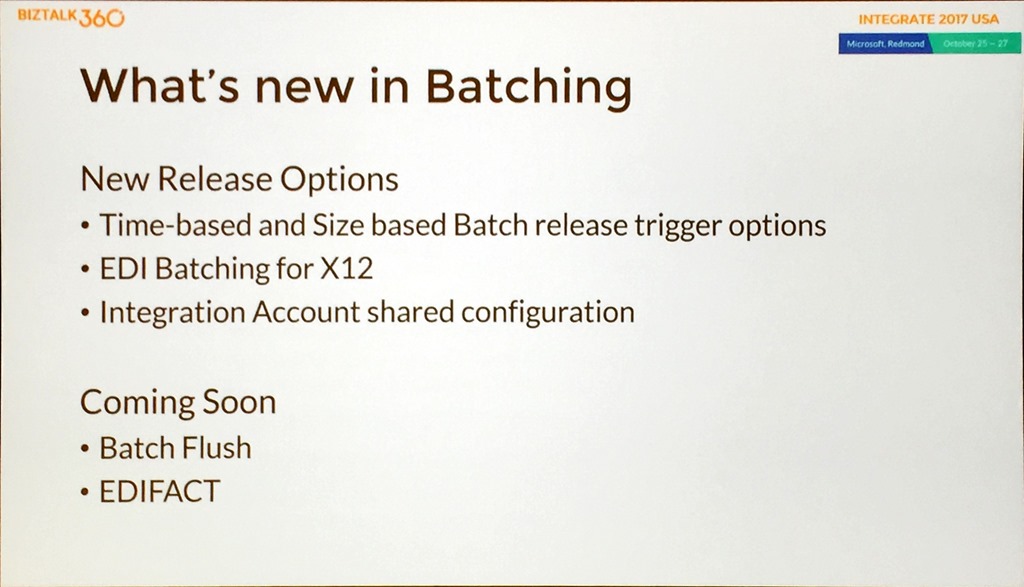

Jon talked about the new batching feature and then gave us a view of what was new and coming.

The last demo of the day showed off the batching feature before Jon did a quick recap and showed some resources.

That was the end of the first day prior to the Keynote, and what a great day it was. There was plenty of information for people who had some knowledge but wanted to learn more, and the presenters were very good at getting an idea of the level of the audience.

With great demos, great presentations and great presenters the conference got off to a real bang.

The only thing that was left after this was the man in the red polo shirt, but let’s cover that in its own post!

Check out the recap of the events on

Day 2 and

Day 3 at Integrate 2017 USA.

It’s a good line up with speakers from the Microsoft integration teams and some great community speakers.

There was a shout out for Integration Monday and Middleware Friday, two awesome community efforts supported by Saravana and BizTalk360.

Saravana was followed by Duncan Barker from BizTalk360 who explained that BizTalk360 has now grown to 50 people and spoke about Turbo360 (Formerly known as Serverless360) and how that has grown and continues to be developed.

Duncan also teased about 2 new products that are coming in 2018 so that’s definitely something to look out for and mentioned that work is already underway for Integrate 2018 so watch your mailboxes for more information on that as the plans begin to take shape.

With the introductions and scene setting done, it was time for the leader of the awesome integration team to take the stage.

It’s a good line up with speakers from the Microsoft integration teams and some great community speakers.

There was a shout out for Integration Monday and Middleware Friday, two awesome community efforts supported by Saravana and BizTalk360.

Saravana was followed by Duncan Barker from BizTalk360 who explained that BizTalk360 has now grown to 50 people and spoke about Turbo360 (Formerly known as Serverless360) and how that has grown and continues to be developed.

Duncan also teased about 2 new products that are coming in 2018 so that’s definitely something to look out for and mentioned that work is already underway for Integrate 2018 so watch your mailboxes for more information on that as the plans begin to take shape.

With the introductions and scene setting done, it was time for the leader of the awesome integration team to take the stage.

With over 180 connectors now in Logic Apps, including many that integrate directly with Azure Services, it is possible to more effectively build integration solutions that span on-premises and cloud and really accelerate adoption through hybrid integration, and taking an API first approach is a great way to unlock business value.

Jim then moved on to serverless, a platform that is just there ready for you to use when you need it.

With over 180 connectors now in Logic Apps, including many that integrate directly with Azure Services, it is possible to more effectively build integration solutions that span on-premises and cloud and really accelerate adoption through hybrid integration, and taking an API first approach is a great way to unlock business value.

Jim then moved on to serverless, a platform that is just there ready for you to use when you need it.

With serverless, you get improved build and delivery, reduced time to market and per action billing and it really flips traditional development on its head.

The Pro Integration team has had a busy year, and this was shown in a single slide.

With serverless, you get improved build and delivery, reduced time to market and per action billing and it really flips traditional development on its head.

The Pro Integration team has had a busy year, and this was shown in a single slide.

This shows just how quickly things are changing and evolving and has included things like Logic Apps going GA, feature packs being introduced for BizTalk and API Mocking which has allowed teams to be more agile and progress at greater speed, making it possible to deliver integration solutions in weeks rather than months.

This agility has led to integration getting a seat at the table instead of being an afterthought.

We then had some great demos from Jon Fancey, Kevin Lam and Jeff Hollan who introduced the demo scenario that would be used throughout the conference, Contoso Fitness.

This shows just how quickly things are changing and evolving and has included things like Logic Apps going GA, feature packs being introduced for BizTalk and API Mocking which has allowed teams to be more agile and progress at greater speed, making it possible to deliver integration solutions in weeks rather than months.

This agility has led to integration getting a seat at the table instead of being an afterthought.

We then had some great demos from Jon Fancey, Kevin Lam and Jeff Hollan who introduced the demo scenario that would be used throughout the conference, Contoso Fitness.

Jon kicked off the demos with a Logic App calling Spotify. This allowed him to show the new Custom Connector and a great resource, https://apis.guru/browse-apis/.

Kevin followed up looking at Azure Security Center and showed the tooling that was introduced at Microsoft Ignite recently. This provides integration directly between Azure Security Center and Logic Apps, including playbooks that are templates which integrate directly into typical service management tools such as Service Now.

Jeff did the last demo on Logic Apps and Cognitive Services. This showed the power of using the Video Indexer API and the ability to spin up a Docker container through a connector that will be released shortly. This container used FFMPEG, an open source tool, to take the transcript generated by the indexer and apply the information as subtitles in the video.

We finished with Jim urging everyone to maximise the value of their projects using integration.

Jon kicked off the demos with a Logic App calling Spotify. This allowed him to show the new Custom Connector and a great resource, https://apis.guru/browse-apis/.

Kevin followed up looking at Azure Security Center and showed the tooling that was introduced at Microsoft Ignite recently. This provides integration directly between Azure Security Center and Logic Apps, including playbooks that are templates which integrate directly into typical service management tools such as Service Now.

Jeff did the last demo on Logic Apps and Cognitive Services. This showed the power of using the Video Indexer API and the ability to spin up a Docker container through a connector that will be released shortly. This container used FFMPEG, an open source tool, to take the transcript generated by the indexer and apply the information as subtitles in the video.

We finished with Jim urging everyone to maximise the value of their projects using integration.

Final message:

Final message:

This set the scene for a great presentation and dive into BizTalk and heritage systems. Paul insisted on calling them heritage rather than legacy, as heritage is something you celebrate and love whilst legacy has a number of negative connotations!

Paul again emphasized the importance of hybrid integration between BizTalk and the cloud, and the message really started resonating. He spent some time positioning BizTalk and how it had changed along with Host Integration Server over the years he has been on the team.

For me, his demo involving Contoso Fitness showcasing mobile applications, Logic Apps, virtual machines, HL7 and a mainframe was one of the best of the day. It showcased hybrid integration with the Logic Apps adapter, and the real breadth and depth of the Microsoft integration story.

Paul explained the reasoning behind the Feature Pack releases, how it was able to deliver new value at a quicker cadence by introducing non-breaking changes and he reviewed what had been delivered in Feature Pack 1.

This set the scene for a great presentation and dive into BizTalk and heritage systems. Paul insisted on calling them heritage rather than legacy, as heritage is something you celebrate and love whilst legacy has a number of negative connotations!

Paul again emphasized the importance of hybrid integration between BizTalk and the cloud, and the message really started resonating. He spent some time positioning BizTalk and how it had changed along with Host Integration Server over the years he has been on the team.

For me, his demo involving Contoso Fitness showcasing mobile applications, Logic Apps, virtual machines, HL7 and a mainframe was one of the best of the day. It showcased hybrid integration with the Logic Apps adapter, and the real breadth and depth of the Microsoft integration story.

Paul explained the reasoning behind the Feature Pack releases, how it was able to deliver new value at a quicker cadence by introducing non-breaking changes and he reviewed what had been delivered in Feature Pack 1.

The information was split between Deployment – application lifecycle management; Runtime – advanced scheduling, SQL encryption columns and web admin; and Analytics – AppInsights for tracking and the Power BI template.

He then mentioned that Feature Pack 2 would be released next month!

Splitting the information the same way, we had: Deployment – application lifecycle management for multiple servers and backup to Blob Storage; Runtime – Adapter for Service Bus v2, TLS 1.2 (although this may be in the next Cumulative Update as it is a critical update), using API Management to expose Orchestration endpoints, and sending/receiving from Event Hub; Analytics – sending data to Event Hub for tracking.

He walked through the BizTalk Server Support Lifecycle.

This shows that BizTalk Server 2013/2013 R2 is out of mainstream support in 9 months and that people should at least start thinking about migrating. NOTE: A cool tool to help with this migration was presented by Microsoft IT on Day 2 and is available for use.

The most important slide was the BizTalk Roadmap.

This clearly shows an ongoing commitment to the product with a timeline for CUs, Feature Packs, and BizTalk vNext.

With that, Paul wrapped up and we had a break, followed by Jeff and Kevin.

To help emphasize the growth of the service, Kevin mentioned that at GA in June 2016 there were about two dozen connectors, now there are nearly 200!

Connectors provide a canonical form for integration that scales to meet the needs of the customer.

A slide was shown that had an animation of the current connectors that went on for a few pages and included colours to indicate connectors to Azure Services (blue) and those to other Microsoft services (orange), along with a list of others really showing how much coverage Logic Apps has.

One of the new features was shown – custom connectors.

Custom connectors are available now and treated just the same as any other connector, including storing secrets in the Logic Apps secret store just like regular connectors.

These conferences are great on their own, but when the teams share what’s next and any roadmap information it is particularly interesting. With that, we were teased with what connectors and services are coming soon.

These include the ability to initialize and destroy containers within the Azure Container Service, Oracle EBS and high availability for the on-premises data gateway. I am particularly interested in the container story and can see this as a great way of running transient compute workloads easily and only when required.

We then moved on to more level setting and to how agile the Logic Apps team is, highlighted by a slide that showed what they have shipped this year, including Visual Studio tooling, nested foreach loops and Ludicrous Mode that allows sharding across the infrastructure to improve performance. Currently, the cadence is roughly a release every two weeks!

To highlight this agility even more, they showed what was coming soon to the service.

Particularly interesting are mock testing, which lets you stub out connectors that are still being built; resubmitting from a failed action rather than an entire run; concurrency control over how parallel foreach loops run, which can be important in ordered delivery scenarios; and snippets, which allow you to create some reusability across your Logic Apps.
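To make the ordered delivery point a little more concrete, here is a minimal Python sketch. It is not Logic Apps code, and `process_all` and `max_concurrency` are hypothetical names of my own. It only illustrates the general idea that capping a parallel loop at one item at a time keeps completions in input order, while a higher cap does not guarantee it.

```python
import asyncio
import random


async def process_all(items, max_concurrency):
    """Process items with at most max_concurrency workers running at once.

    Returns the items in the order in which they finished processing.
    """
    queue = asyncio.Queue()
    for item in items:
        queue.put_nowait(item)
    completed = []

    async def worker():
        while True:
            try:
                item = queue.get_nowait()
            except asyncio.QueueEmpty:
                return  # Nothing left to process.
            # Simulate work that takes a variable amount of time.
            await asyncio.sleep(random.uniform(0.001, 0.01))
            completed.append(item)

    await asyncio.gather(*(worker() for _ in range(max_concurrency)))
    return completed


if __name__ == "__main__":
    messages = list(range(10))
    # A single worker finishes items strictly in input order.
    print(asyncio.run(process_all(messages, max_concurrency=1)))
    # Several workers finish every item, but the order may vary.
    print(asyncio.run(process_all(messages, max_concurrency=5)))
```

The trade-off is the usual one: the single-worker run preserves order but gives up the throughput that the parallel run offers.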

The new pricing model that comes into effect on 1st November was shown. This has a 32x reduction in the cost of native actions, a 6.5x reduction in the cost of standard connectors, and brings enterprise connectors in line with other connectors, based on pay per execution.

The pricing changes also apply to Integration Accounts, which come down to a third of their previous price.

With that Jeff wrapped up with another great demo for Contoso Fitness showing how to integrate a Flic button to emulate a customer pushing a button on a fitness machine when it needed maintenance or cleaning, sending an alert via an HTTP trigger to ServiceNow.

We then had a change in presentation order, with Vlad and Miao covering API Management.

He continued by explaining how API Management is positioned and how it can be used to drive loyalty, build new services and channels to market and how it can help cope with multi-speed IT where not every part of a solution or business wants the same pace of change.

Vlad continued with a general overview of policies and how to use them to enforce certain things like access control and rate limits, and how you can chain them together by explaining the scope and the cumulative nature of policies.
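As a rough way to picture the cumulative nature of policies, here is a small Python sketch. It is my own model rather than anything shown in the session: the `Scope` class and the policy labels are hypothetical, and it assumes each scope applies whatever it inherits from its parent before its own policies.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Scope:
    """A hypothetical policy scope, e.g. global, API or operation."""
    name: str
    policies: List[str] = field(default_factory=list)
    parent: Optional["Scope"] = None

    def effective_policies(self) -> List[str]:
        """Return inherited policies first, then this scope's own policies."""
        inherited = self.parent.effective_policies() if self.parent else []
        return inherited + self.policies


# Illustrative labels only, not real policy element names.
global_scope = Scope("global", ["access-control"])
api_scope = Scope("api", ["rate-limit"], parent=global_scope)
operation_scope = Scope("operation", ["remove-header"], parent=api_scope)

print(operation_scope.effective_policies())
# → ['access-control', 'rate-limit', 'remove-header']
```

A call to a single operation therefore picks up everything defined at the wider scopes as well as its own policy, which is what makes chaining them together so useful.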

After a discussion about security, the conversation moved on to the inclusion of VNets to help control access to on-premises APIs and then multi-region support and scaling that is available as a premium feature. This allows you to deploy units of scale across regions, includes request caching out of the box, allows incremental growth of APIs, and allows different scales in different regions. It is a great way to grow your APIs as your business grows.

Miao then did a great demo, showing the key features of the service, firstly showing how to create an API, including SOAP to REST to allow more modern access to heritage APIs.

Using the Developer Portal to allow testing of the APIs he showed how to apply a number of policies such as removing headers, replacing backend URLs and rate limiting, followed by using the tracing feature to gain insight into the information passed to and from an API call and what policies are applied.

Any enterprise solution requires in-depth insight, so Miao moved on to monitoring and using Metrics in the Azure Portal to set alerts and using it to call a Logic App followed by the Diagnostic settings and Log Searching.

We then moved to looking at the new Power BI template that can be deployed with a single click.

This looks like a great way of delivering insight into an API Management deployment and has been created based on customer asks. It uses Event Hubs, Stream Analytics, and SQL Database.

After a slide that showed the growth in API Management, Vlad then showed how much work has been done in the last 12 months.

Like the other presentations, this shows just how agile and engaged the team is and how they are really delivering value to us as users of their service.

With that Vlad provided a list of resources and closed out the morning session.

After lunch, we had 3 presentations on the messaging services within Azure that took proceedings up to the afternoon break.

Using a hammer for illustration, Dan gave a great presentation on where a hammer is the right tool and where it is not. This included an unusual demo that showed how to open a beer with a hammer live on stage!

And really that was the main thrust of the presentation, that with Azure messaging being such a large set of tools, it is important to choose the right tool for the job.

To further hammer home the point, he talked about 3 scenarios to fit these tools:

She moved on to how Event Hub answers all the typical questions asked about big data solutions, such as how do you handle data that has velocity, volume, and variety, can you deal with regional disasters and do real-time streaming as well as batch capture, what can Event Hub integrate with, and how can you handle support. Again, for any production solution, it is important to be able to lift the covers and see what is happening and how a solution is performing.

Shubha did a great demo showing how to use Event Hubs and Event Grid to move stream data into SQL Data Warehouse using the Capture feature of Event Hubs, which allows you to persist the telemetry data into a storage account. This demo used an Azure Function to react to an Event Grid event, fired when a storage file was created, and process the data into SQL DW.
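For anyone wondering what the reacting step might look like, here is a minimal Python sketch of the filtering logic only. It is not the code from the demo: `capture_blob_url` is a hypothetical helper, the storage account and container names are made up, and I am assuming the event arrives in the standard storage blob-created shape with an `eventType` and a `data.url` field.

```python
import json
from typing import Optional

BLOB_CREATED = "Microsoft.Storage.BlobCreated"


def capture_blob_url(event: dict) -> Optional[str]:
    """Return the blob URL if this is a blob-created event, else None."""
    if event.get("eventType") != BLOB_CREATED:
        return None
    return event.get("data", {}).get("url")


# A made-up sample event; the account and container names are placeholders.
sample_event = json.loads("""
{
  "eventType": "Microsoft.Storage.BlobCreated",
  "subject": "/blobServices/default/containers/capture/blobs/sample.avro",
  "data": {"url": "https://example.blob.core.windows.net/capture/sample.avro"}
}
""")

print(capture_blob_url(sample_event))
# → https://example.blob.core.windows.net/capture/sample.avro
```

In a real Function, the returned URL would be the starting point for reading the captured file and loading it into the warehouse.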

Leaving the best until last Shubha gave some indication of what was coming soon from the team.

This includes the general availability of Geo DR, IP filtering and VNet support and a portal experience for creating dedicated clusters.

We then had a bonus session for the day that was not scheduled.

He went through the important points of the slide, including that the Event Grid scenario is for lower volumes and not millions of messages. They are introducing Geo DR for Service Bus that will allow you to pair 2 independent namespaces and access them through an alias. NOTE: In this first release it is only metadata that is failed over between regions, not the data that is on any Service Bus asset.

A good point was made about the .NET Standard Client. It has breaking changes, so Christian urged anyone wanting to adopt it to spend time in the release notes and testing.

Christian then did a couple of good demos, the first using Service Bus and Event Grid to simulate Clemens Vasters wanting to buy an airplane (so a likely scenario!), and using Dynamics 365 to react to a new sales opportunity. The second demo showed the Geo DR capabilities and showed that monitoring is not entirely straightforward. Christian used Turbo360 (Formerly known as Serverless360) to help drive the demo.

Christian finished with what’s next for Service Bus.

This includes a capability to allow migration between standard and premium SKUs, a new management library, the introduction of throttling in the standard SKU, which is not dedicated, to eliminate noisy neighbours and Data DR as a broader part of the disaster recovery strategy.

This led to the final break of the day, with 2 more presentations standing between attendees and Scott Guthrie’s keynote.

Eduardo explained how Functions evolved: it came from App Service, so HTTP has always been a native part of the service.

Eduardo showed the bindings and triggers, but directed people to the documentation for an up-to-date list.

Following up with a discussion about developer tooling the discussion then turned to Functions by the numbers. The key takeaway from that was when customers go to Functions they are continuing to move more things over time as they evolve their ecosystems.

Eduardo did a demo that really showed the power of bindings by walking through the Function creation process for a Blob Storage trigger, performing a simple file upload, changing the input from Stream to byte[] and showing that it just still works exactly the same way.

After speaking about the difference between Function bindings and Logic Apps connectors (low code v no code, 23 bindings v 180+ connectors, ideal for data flow v ideal for workflow orchestration, data type in code v fully managed) Eduardo explained that as Functions is open source, anyone can go and create a new custom binding, and that he’d be happy to discuss having more community contributed bindings in the service.

We then moved on to the new Microsoft Graph binding announced at Ignite.

This provides a way of finding correlations across different data sets, but the real magic is that it incorporates identity so you don’t have to.

We had 2 demos, the first showing the Graph binding, and the second showing the new Excel binding with data being added to an Excel file.

Proxies is a recently added feature that will be going GA soon, so Eduardo spent some time explaining how it works and did a great demo showing how you can use proxies for URL redirection and mocking of responses since you can specify a response payload. He then gave some scenarios where you may want to use proxies.
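To show the two uses side by side, here is a small Python sketch of the idea. It is not how Functions Proxies are actually configured: `ProxyRule`, `resolve`, the routes and the backend URL are all hypothetical, and the sketch only models the decision between forwarding a request and returning a canned response.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class ProxyRule:
    """A hypothetical proxy rule: forward to a backend or return a mock."""
    backend_url: Optional[str] = None
    mock_body: Optional[str] = None


def resolve(rules: Dict[str, ProxyRule], path: str) -> Tuple[str, Optional[str]]:
    """Decide what to do with a request path.

    Returns ("mock", body) when a canned response is configured,
    ("forward", url) when the request should go to a backend,
    and ("not_found", None) when no rule matches.
    """
    rule = rules.get(path)
    if rule is None:
        return ("not_found", None)
    if rule.mock_body is not None:
        return ("mock", rule.mock_body)
    return ("forward", rule.backend_url)


rules = {
    "/api/orders": ProxyRule(backend_url="https://example.com/v2/orders"),
    "/api/status": ProxyRule(mock_body='{"status": "ok"}'),
}

print(resolve(rules, "/api/orders"))
print(resolve(rules, "/api/status"))
```

The mocking case is handy when a backend is not built yet, since callers can be developed against the canned payload and switched to the real URL later.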

Like most of the presentations during the day, he finished with a list of takeaways and resources.

The final presentation of the day before Scott was delivered by Jon Fancey.

He explained the XML and B2B capabilities that are provided with the Integration Account and talked about DR scenarios, which are important to consider as Integration Accounts hold stateful information. DR is achieved by having a Primary and (multiple) Secondary Integration Accounts in different regions, and the service uses Logic Apps to keep Integration Account states in sync.

Jon moved on to trading partner migration and a tool (TPM) that has been written to allow customers to easily move trading partners and agreements between BizTalk Server and Logic Apps.

Jon gave an explanation of the traditional VETER pipeline and then moved to what is new in mapping.

With this, he introduced Liquid, which allows mapping between different entity types using a DSL, and did a demo of it using Visual Studio Code.

After talking about the tracking features in Logic Apps, Jon gave us a glimpse of what was coming in Monitoring.

Key takeaways from this list are the OMS template and the work around harmonizing the querying capabilities to bring them in line with AppInsights.

Jon did a demo to highlight these features showing OMS in the portal, drilling through the data, showing batch resubmit by looking at Runs and selecting, and tracked properties containing your own tracking, then showed taking a Query and creating a custom tile in the OMS workspace.

Next up for the “new” treatment was connectors.

There had already been the discussion about custom connectors earlier in the day but it was great to see SOAP to REST, which shipped the same day, to allow even more opportunities to leverage current investments.

Time for another demo, this time looking at SOAP to REST using a custom connector. This was a great demo that involved Jon changing a SOAP app on the fly, adding a new custom connector, then running the service, plus a great He-Man reference, “By the Power of Grayskull”, always a bonus!

Jon talked about the new batching feature and then gave us a view of what was new and coming.

The last demo of the day showed off the batching feature before Jon did a quick recap and showed some resources.

That was the end of the first day prior to the Keynote, and what a great day it was. There was plenty of information for people who had some knowledge but wanted to learn more, and the presenters were very good at getting an idea of the level of the audience.

With great demos, great presentations and great presenters the conference got off to a real bang.

The only thing that was left after this was the man in the red polo shirt, but let’s cover that in its own post!

Check out the recap of the events on Day 2 and Day 3 at Integrate 2017 USA.
